Unsloth - Llms-Txt

!pip install huggingface_hub hf_transfer

URL: llms-txt#!pip-install-huggingface_hub-hf_transfer

```python
import os

os.environ["HF_HUB_ENABLE_HF_TRANSFER"] = "1"  # must be set before importing huggingface_hub

from huggingface_hub import snapshot_download

snapshot_download(
    repo_id = "unsloth/Llama-4-Scout-17B-16E-Instruct-GGUF",
    local_dir = "unsloth/Llama-4-Scout-17B-16E-Instruct-GGUF",
    allow_patterns = ["*IQ2_XXS*"],  # glob pattern: download only the IQ2_XXS quant
)
```
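`allow_patterns` is matched as a Unix shell-style glob, so a bare `"IQ2_XXS"` only matches a file literally named `IQ2_XXS`, while `"*IQ2_XXS*"` matches any filename containing it. The check below uses Python's standard `fnmatch`, which, as far as we know, is also what `huggingface_hub` uses for this filtering. The file listing is hypothetical; the `.gguf` name mirrors the one passed to `llama-cli` below.

```python
from fnmatch import fnmatch

# Hypothetical repo listing; the .gguf name matches the one passed to llama-cli.
repo_files = [
    "Llama-4-Scout-17B-16E-Instruct-UD-IQ2_XXS.gguf",
    "Llama-4-Scout-17B-16E-Instruct-UD-Q4_K_XL.gguf",
    "README.md",
]

def matching(files, patterns):
    """Return the files that match at least one glob pattern."""
    return [f for f in files if any(fnmatch(f, p) for p in patterns)]

print(matching(repo_files, ["IQ2_XXS"]))    # no wildcards: nothing matches
print(matching(repo_files, ["*IQ2_XXS*"]))  # wildcards: only the IQ2_XXS file
```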

```bash
./llama.cpp/llama-cli \
    --model unsloth/Llama-4-Scout-17B-16E-Instruct-GGUF/Llama-4-Scout-17B-16E-Instruct-UD-IQ2_XXS.gguf \
    --threads 32 \
    --ctx-size 16384 \
    --n-gpu-layers 99 \
    -ot ".ffn_.*_exps.=CPU" \
    --seed 3407 \
    --prio 3 \
    --temp 0.6 \
    --min-p 0.01 \
    --top-p 0.9 \
    -no-cnv \
    --prompt "<|header_start|>user<|header_end|>\n\nCreate a Flappy Bird game.<|eot|><|header_start|>assistant<|header_end|>\n\n"
```
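The `-ot ".ffn_.*_exps.=CPU"` override keeps matching tensors in system RAM while `--n-gpu-layers 99` offloads the rest to the GPU. The Python sketch below shows which tensor names such a regex would match, assuming llama.cpp searches for the pattern anywhere in each GGUF tensor name. The tensor names are illustrative examples of MoE naming, not read from the actual file. Note that `.` in the pattern is a regex wildcard, not a literal dot.

```python
import re

# Pattern passed to llama-cli via -ot (tensor override); matching tensors stay on CPU.
PATTERN = re.compile(r".ffn_.*_exps.")

# Illustrative GGUF tensor names for one MoE layer (assumed naming).
tensor_names = [
    "blk.0.attn_q.weight",
    "blk.0.ffn_gate_exps.weight",
    "blk.0.ffn_up_exps.weight",
    "blk.0.ffn_down_exps.weight",
    "blk.0.ffn_gate_shexp.weight",
]

def placement(name: str) -> str:
    """Return 'CPU' if the override regex matches anywhere in the name, else 'GPU'
    (assuming every remaining layer is offloaded by --n-gpu-layers 99)."""
    return "CPU" if PATTERN.search(name) else "GPU"

for name in tensor_names:
    print(f"{name:32} -> {placement(name)}")
```

Only the routed expert tensors (`*_exps`) match, so attention weights and the illustrative shared-expert tensor stay on the GPU.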

{% hint style="success" %}

Read more on running Llama 4 here: <https://docs.unsloth.ai/basics/tutorial-how-to-run-and-fine-tune-llama-4>

{% endhint %}


First uninstall xformers installed by previous libraries

URL: llms-txt#first-uninstall-xformers-installed-by-previous-libraries

pip uninstall xformers -y

(1) Saving to GGUF / merging to 16bit for vLLM

URL: llms-txt#(1)-saving-to-gguf-/-merging-to-16bit-for-vllm

Qwen3-Coder: How to Run Locally

URL: llms-txt#qwen3-coder:-how-to-run-locally

Contents:

- 🖥️ Running Qwen3-Coder

- ⚙️ Recommended Settings

- Run Qwen3-Coder-30B-A3B-Instruct:

Run Qwen3-Coder-30B-A3B-Instruct and 480B-A35B locally with Unsloth Dynamic quants.

Qwen3-Coder is Qwen’s new series of coding agent models, available in 30B (Qwen3-Coder-Flash) and 480B parameter sizes. Qwen3-Coder-480B-A35B-Instruct achieves SOTA coding performance rivalling Claude Sonnet 4, GPT-4.1, and Kimi K2, scoring 61.8% on Aider Polyglot, and supports a 256K-token context (extendable to 1M).

We also uploaded Qwen3-Coder with native 1M context length extended by YaRN and full-precision 8bit and 16bit versions. Unsloth also now supports fine-tuning and RL of Qwen3-Coder.

{% hint style="success" %} UPDATE: We fixed tool-calling for Qwen3-Coder! You can now use tool-calling seamlessly in llama.cpp, Ollama, LMStudio, Open WebUI, Jan etc. This issue was universal and affected all uploads (not just Unsloth), and we've communicated with the Qwen team about our fixes! Read more {% endhint %}

{% hint style="success" %} Do Unsloth Dynamic Quants work? Yes, and very well. In third-party testing on the Aider Polyglot benchmark, the UD-Q4_K_XL (276GB) dynamic quant nearly matched the full bf16 (960GB) Qwen3-Coder model, scoring 60.9% vs 61.8%. More details here. {% endhint %}

Qwen3 Coder - Unsloth Dynamic 2.0 GGUFs:

| Dynamic 2.0 GGUF (to run) | 1M Context Dynamic 2.0 GGUF |
|---|---|

🖥️ Running Qwen3-Coder

Below are guides for the 30B-A3B and 480B-A35B variants of the model.

⚙️ Recommended Settings

Qwen recommends these inference settings for both models:

temperature=0.7, top_p=0.8, top_k=20, repetition_penalty=1.05

- Temperature of 0.7

- Top_K of 20

- Min_P of 0.00 (optional, but 0.01 works well, llama.cpp default is 0.1)

- Top_P of 0.8

- Repetition Penalty of 1.05

- Chat template:

{% code overflow="wrap" %}

{% endcode %}

- Recommended context output: 65,536 tokens (can be increased). Details here.
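If you drive llama.cpp from a script, the recommended settings can be kept in one place and turned into CLI flags. This is a minimal sketch: the setting-to-flag mapping (`--temp`, `--top-p`, `--top-k`, `--min-p`, `--repeat-penalty`) reflects llama.cpp's sampling options as we understand them, so double-check it against `llama-cli --help` for your build. `to_llama_cpp_args` is a hypothetical helper.

```python
# Qwen's recommended sampling settings for Qwen3-Coder (from the list above).
RECOMMENDED = {
    "temperature": 0.7,
    "top_p": 0.8,
    "top_k": 20,
    "min_p": 0.0,
    "repetition_penalty": 1.05,
}

# Assumed mapping from setting names to llama.cpp command-line flags.
LLAMA_CPP_FLAGS = {
    "temperature": "--temp",
    "top_p": "--top-p",
    "top_k": "--top-k",
    "min_p": "--min-p",
    "repetition_penalty": "--repeat-penalty",
}

def to_llama_cpp_args(settings: dict) -> list[str]:
    """Turn a settings dict into a flat list of llama.cpp command-line arguments."""
    args = []
    for key, value in settings.items():
        args += [LLAMA_CPP_FLAGS[key], str(value)]
    return args

print(" ".join(to_llama_cpp_args(RECOMMENDED)))
```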

Chat template/prompt format with newlines un-rendered

{% code overflow="wrap" %}

Chat template for tool calling (Getting the current temperature for San Francisco). More details here for how to format tool calls.

{% hint style="info" %}

Reminder that this model supports only non-thinking mode and does not generate `<think></think>` blocks in its output, so specifying `enable_thinking=False` is no longer required.

{% endhint %}

Run Qwen3-Coder-30B-A3B-Instruct:

To achieve inference speeds of 6+ tokens per second with our Dynamic 4-bit quant, have at least 18GB of unified memory (combined VRAM and RAM) or 18GB of system RAM alone. As a rule of thumb, your available memory should match or exceed the size of the model you’re using. For example, the UD-Q8_K_XL quant (full precision), which is 32.5GB, requires at least 33GB of unified memory (VRAM + RAM) or 33GB of RAM for optimal performance.

NOTE: The model can run on less memory than its total size, but this will slow down inference. Maximum memory is only needed for the fastest speeds.
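The rule of thumb above can be written down as a tiny check. This is a sketch of the stated heuristic only; `min_memory_gb` and `meets_rule_of_thumb` are hypothetical helpers, and a `False` result means slower inference rather than failure.

```python
import math

def min_memory_gb(model_size_gb: float) -> int:
    """Rule of thumb: available memory should match or exceed the model file size."""
    return math.ceil(model_size_gb)

def meets_rule_of_thumb(model_size_gb: float, vram_gb: float, ram_gb: float) -> bool:
    """True if combined VRAM + RAM (unified memory) covers the model size."""
    return vram_gb + ram_gb >= model_size_gb

print(min_memory_gb(32.5))                # UD-Q8_K_XL at 32.5GB -> 33
print(meets_rule_of_thumb(32.5, 24, 16))  # 40GB combined -> True
print(meets_rule_of_thumb(32.5, 8, 16))   # 24GB combined -> False (runs, but slower)
```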

Because this is a non-thinking model, there is no need to set `enable_thinking=False`, and the model does not generate `<think></think>` blocks.

{% hint style="info" %} Follow the best practices above. They're the same as the 480B model. {% endhint %}

🦙 Ollama: Run Qwen3-Coder-30B-A3B-Instruct Tutorial

1. Install `ollama` if you haven't already! You can only run models up to 32B in size.
2. Run the model! Note that you can call `ollama serve` in another terminal if it fails. We include all our fixes and suggested parameters (temperature etc.) in `params` in our Hugging Face upload!

✨ Llama.cpp: Run Qwen3-Coder-30B-A3B-Instruct Tutorial

1. Obtain the latest `llama.cpp` on GitHub here. You can follow the build instructions below as well. Change `-DGGML_CUDA=ON` to `-DGGML_CUDA=OFF` if you don't have a GPU or just want CPU inference.
2. You can directly pull from Hugging Face via:
3. Download the model via the following (after installing `pip install huggingface_hub hf_transfer`). You can choose UD-Q4_K_XL or other quantized versions.
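The download step can be sketched in Python in the same style as the Llama 4 `snapshot_download` call at the top of this page. This is a minimal sketch: the repo id `unsloth/Qwen3-Coder-30B-A3B-Instruct-GGUF` and the helper names `qwen3_coder_download_kwargs` and `download` are assumptions, not names taken from this guide. The download itself only happens when you call `download()`.

```python
import os

def qwen3_coder_download_kwargs(quant: str = "UD-Q4_K_XL") -> dict:
    """Build snapshot_download arguments that fetch a single quant of the GGUF repo."""
    repo_id = "unsloth/Qwen3-Coder-30B-A3B-Instruct-GGUF"  # assumed repo name
    return {
        "repo_id": repo_id,
        "local_dir": repo_id,
        # Glob pattern: wildcards are needed to match the full .gguf filename.
        "allow_patterns": [f"*{quant}*"],
    }

def download(quant: str = "UD-Q4_K_XL") -> str:
    """Download one quant and return the local folder path.

    Requires huggingface_hub and hf_transfer. The env var only takes effect if
    huggingface_hub has not already been imported in this process.
    """
    os.environ["HF_HUB_ENABLE_HF_TRANSFER"] = "1"
    from huggingface_hub import snapshot_download
    return snapshot_download(**qwen3_coder_download_kwargs(quant))
```

For example, `download("UD-Q4_K_XL")` should fetch only the files whose names contain `UD-Q4_K_XL`.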

Examples:

Example 1 (unknown):

```
<|im_start|>user
Hey there!<|im_end|>
<|im_start|>assistant
What is 1+1?<|im_end|>
<|im_start|>user
2<|im_end|>
<|im_start|>assistant
```

Example 2 (unknown):

<|im_start|>user\nHey there!<|im_end|>\n<|im_start|>assistant\nWhat is 1+1?<|im_end|>\n<|im_start|>user\n2<|im_end|>\n<|im_start|>assistant\n
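The un-rendered string above is just each turn wrapped in `<|im_start|>{role}\n ... <|im_end|>\n`, followed by an open assistant turn. The hand-rolled sketch below reproduces that exact string from a list of messages. It only covers plain-text turns (no system prompt or tools); in practice you would normally rely on the tokenizer's own chat template, for example `tokenizer.apply_chat_template` in Hugging Face `transformers`, which may add more than this. `render_chatml` is a hypothetical helper.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Render {"role", "content"} messages into the ChatML-style prompt shown above."""
    text = "".join(
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    )
    if add_generation_prompt:
        text += "<|im_start|>assistant\n"  # open turn that cues the model to reply
    return text

messages = [
    {"role": "user", "content": "Hey there!"},
    {"role": "assistant", "content": "What is 1+1?"},
    {"role": "user", "content": "2"},
]
print(repr(render_chatml(messages)))
```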

Example 3 (unknown):

```
<|im_start|>user
What's the temperature in San Francisco now? How about tomorrow?<|im_end|>
<|im_start|>assistant
<tool_call>\n<function=get_current_temperature>\n<parameter=location>\nSan Francisco, CA, USA
</parameter>\n</function>\n</tool_call><|im_end|>
<|im_start|>user
<tool_response>
{"temperature": 26.1, "location": "San Francisco, CA, USA", "unit": "celsius"}
</tool_response>\n<|im_end|>
```
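On the client side, a tool call in this XML-style format has to be turned back into a function name and arguments. The sketch below is a hypothetical regex-based parser, not Qwen's or llama.cpp's official one. It assumes the layout shown above (with real newlines instead of `\n` escapes) and returns every argument as a string, without type conversion.

```python
import re

TOOL_CALL = re.compile(
    r"<tool_call>\s*<function=([^>\n]+)>(.*?)</function>\s*</tool_call>", re.S
)
PARAM = re.compile(r"<parameter=([^>\n]+)>\n?(.*?)\n?</parameter>", re.S)

def parse_tool_calls(text: str) -> list[dict]:
    """Extract {"name", "arguments"} dicts from XML-style tool-call blocks."""
    calls = []
    for name, body in TOOL_CALL.findall(text):
        args = {key.strip(): value.strip() for key, value in PARAM.findall(body)}
        calls.append({"name": name.strip(), "arguments": args})
    return calls

reply = (
    "<tool_call>\n<function=get_current_temperature>\n"
    "<parameter=location>\nSan Francisco, CA, USA\n</parameter>\n"
    "</function>\n</tool_call>"
)
print(parse_tool_calls(reply))
```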

Example 4 (bash):

```bash
apt-get update
apt-get install pciutils -y
curl -fsSL https://ollama.com/install.sh | sh
```

Ensure all audio is at 24 kHz sampling rate (Orpheus’s expected rate)

URL: llms-txt#ensure-all-audio-is-at-24-khz-sampling-rate-(orpheus’s-expected-rate)

Contents:

- Fine-Tuning TTS with Unsloth

dataset = dataset.cast_column("audio", Audio(sampling_rate=24000))

```
filename,text
0001.wav,Hello there!
0002.wav,I am very tired.
```

```python
from datasets import Audio, load_dataset

dataset = load_dataset("csv", data_files="mydata.csv", split="train")
# Rename the path column so the decoded audio is available as dataset[i]["audio"]
dataset = dataset.rename_column("filename", "audio")
dataset = dataset.cast_column("audio", Audio(sampling_rate=24000))
```

```python
from unsloth import FastLanguageModel
import torch

dtype = None  # None for auto detection. Float16 for Tesla T4, V100; Bfloat16 for Ampere+
load_in_4bit = False  # Set to True to use 4-bit quantization and reduce memory usage

model, tokenizer = FastLanguageModel.from_pretrained(
    model_name = "unsloth/orpheus-3b-0.1-ft",
    max_seq_length = 2048,  # Choose any for long context!
    dtype = dtype,
    load_in_4bit = load_in_4bit,
    # token = "hf_...",  # use one if using gated models like meta-llama/Llama-2-7b-hf
)

from datasets import load_dataset
dataset = load_dataset("MrDragonFox/Elise", split = "train")
```

Examples:

Example 1 (unknown):

This will download the dataset (\~328 MB for \~1.2k samples). Each item in `dataset` is a dictionary with at least:

* `"audio"`: the audio clip (waveform array and metadata like sampling rate), and

* `"text"`: the transcript string

Orpheus supports tags like `<laugh>`, `<chuckle>`, `<sigh>`, `<cough>`, `<sniffle>`, `<groan>`, `<yawn>`, `<gasp>`, etc. For example: `"I missed you <laugh> so much!"`. These tags are enclosed in angle brackets and will be treated as special tokens by the model (they match [Orpheus’s expected tags](https://github.com/canopyai/Orpheus-TTS) like `<laugh>` and `<sigh>`). During training, the model learns to associate these tags with the corresponding audio patterns. The Elise dataset with tags already has many of these (e.g., 336 occurrences of “laughs”, 156 of “sighs”, etc., as listed in its card). If your dataset lacks such tags but you want to incorporate them, you can manually annotate the transcripts where the audio contains those expressions.
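Before training, it can help to check which tags actually occur in your transcripts and whether they use the expected names. The snippet below is a hypothetical helper, not part of Unsloth or Orpheus: `KNOWN_TAGS` simply copies the tags listed above, and we assume tags are lowercase words inside angle brackets.

```python
import re
from collections import Counter

# Tags mentioned above; the exact set Orpheus recognises is an assumption here.
KNOWN_TAGS = {"laugh", "chuckle", "sigh", "cough", "sniffle", "groan", "yawn", "gasp"}
TAG_RE = re.compile(r"<([a-z_]+)>")

def count_tags(transcripts):
    """Count <tag> occurrences across transcripts, split into known and unknown tags."""
    counts = Counter(tag for t in transcripts for tag in TAG_RE.findall(t))
    known = {k: v for k, v in counts.items() if k in KNOWN_TAGS}
    unknown = {k: v for k, v in counts.items() if k not in KNOWN_TAGS}
    return known, unknown

known, unknown = count_tags([
    "I missed you <laugh> so much!",
    "<sigh> Fine, let's go.",
    "That was <laughs> great.",  # plural form: not in KNOWN_TAGS
])
print(known)    # {'laugh': 1, 'sigh': 1}
print(unknown)  # {'laughs': 1}
```

Anything that ends up in `unknown` is a candidate for renaming to an expected tag or for removal before fine-tuning.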

**Option 2: Preparing a custom dataset** – If you have your own audio files and transcripts:

* Organize audio clips (WAV/FLAC files) in a folder.

* Create a CSV or TSV file with columns for file path and transcript. For example:

Example 2 (unknown):

* Use `load_dataset("csv", data_files="mydata.csv", split="train")` to load it. You might need to tell the dataset loader how to handle audio paths. An alternative is using the `datasets.Audio` feature to load audio data on the fly:

Example 3 (unknown):

Then `dataset[i]["audio"]` will contain the audio array.

* **Ensure transcripts are normalized** (no unusual characters that the tokenizer might not know, except the emotion tags if used). Also ensure all audio has a consistent sampling rate (resample if necessary to the rate the model expects, e.g. 24 kHz for Orpheus).

In summary, for **dataset preparation**:

* You need a **list of (audio, text)** pairs.

* Use the HF `datasets` library to handle loading and optional preprocessing (like resampling).

* Include any **special tags** in the text that you want the model to learn (ensure they are in `<angle_brackets>` format so the model treats them as distinct tokens).

* (Optional) If multi-speaker, you could include a speaker ID token in the text or use a separate speaker embedding approach, but that’s beyond this basic guide (Elise is single-speaker).
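A quick sanity check over (audio, text) pairs can catch the issues listed above before training. This minimal sketch assumes each decoded row looks like `{"audio": {"array": ..., "sampling_rate": ...}, "text": ...}`, which is how older versions of the `datasets` Audio feature return audio. Newer releases may return a decoder object instead, so adapt the access accordingly. `check_example` is a hypothetical helper.

```python
TARGET_SR = 24_000  # Orpheus expects 24 kHz audio

def check_example(example: dict) -> list[str]:
    """Return a list of problems found in one {"audio", "text"} dataset row."""
    problems = []
    audio = example.get("audio") or {}
    if audio.get("sampling_rate") != TARGET_SR:
        problems.append(f"sampling_rate is {audio.get('sampling_rate')}, expected {TARGET_SR}")
    if len(audio.get("array", [])) == 0:
        problems.append("empty audio array")
    if not str(example.get("text", "")).strip():
        problems.append("empty transcript")
    return problems

rows = [
    {"audio": {"array": [0.0, 0.1], "sampling_rate": 24000}, "text": "Hello there!"},
    {"audio": {"array": [0.0], "sampling_rate": 16000}, "text": " "},
]
for i, row in enumerate(rows):
    print(i, check_example(row))
```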

### Fine-Tuning TTS with Unsloth

Now, let’s start fine-tuning! We’ll illustrate using Python code (which you can run in a Jupyter notebook, Colab, etc.).

**Step 1: Load the Model and Dataset**

In all our TTS notebooks, we enable LoRA (16-bit) training and disable QLoRA (4-bit) training with `load_in_4bit = False`. This usually lets the model learn your dataset better and reach higher accuracy.

Example 4 (unknown):

{% hint style="info" %}

If memory is very limited or the dataset is large, you can stream it or load it in chunks. Here, 3 hours of audio easily fit in RAM. If you are using your own dataset CSV, load it similarly.

{% endhint %}

**Step 2: Advanced - Preprocess the data for training (Optional)**

We need to prepare inputs for the Trainer. For text-to-speech, one approach is to train the model in a causal manner: concatenate text and audio token IDs as the target sequence. However, since Orpheus is a decoder-only LLM that outputs audio, we can feed the text as input (context) and have the audio token ids as labels. In practice, Unsloth’s integration might do this automatically if the model’s config identifies it as text-to-speech. If not, we can do something like:
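As a rough illustration of the "text as context, audio tokens as labels" idea, the sketch below builds one training example from already-tokenized ids. It is not Unsloth's actual preprocessing: the token ids are made up, and real Orpheus inputs also need the model's special start/end tokens, which are omitted here. Positions labelled `-100` are ignored by PyTorch's cross-entropy loss (its default `ignore_index`), so only the audio tokens are learned; Hugging Face causal-LM models shift the labels internally.

```python
IGNORE_INDEX = -100  # label value ignored by PyTorch's cross-entropy loss by default

def build_example(text_ids: list[int], audio_ids: list[int]) -> dict:
    """Concatenate text and audio token ids; only audio positions contribute to the loss."""
    input_ids = text_ids + audio_ids
    labels = [IGNORE_INDEX] * len(text_ids) + audio_ids
    return {
        "input_ids": input_ids,
        "attention_mask": [1] * len(input_ids),
        "labels": labels,
    }

example = build_example([101, 102, 103], [9001, 9002])  # hypothetical token ids
print(example["labels"])  # [-100, -100, -100, 9001, 9002]
```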

All Our Models

URL: llms-txt#all-our-models

Contents:

- New & recommended models:

- DeepSeek models:

- Llama models:

- Gemma models:

- Qwen models:

- Mistral models:

- Phi models:

- Other (GLM, Orpheus, Smol, Llava etc.) models:


Unsloth model catalog for all our Dynamic GGUF, 4-bit, 16-bit models on Hugging Face.

{% tabs %} {% tab title="• GGUF + 4-bit" %}

GGUFs let you run models in tools like Ollama, Open WebUI, and llama.cpp.

Instruct (4-bit) safetensors can be used for inference or fine-tuning.

New & recommended models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| gpt-oss | 120b | link | link |
| | 20b | link | link |
| DeepSeek-V3.1 | Terminus | link | — |
| | V3.1 | link | — |
| Qwen3-VL | 2B-Instruct | link | link |
| | 2B-Thinking | link | link |
| | 4B-Instruct | link | link |
| | 4B-Thinking | link | link |
| | 8B-Instruct | link | link |
| | 8B-Thinking | link | link |
| | 30B-A3B-Instruct | link | — |
| | 30B-A3B-Thinking | link | — |
| | 32B-Instruct | link | link |
| | 32B-Thinking | link | link |
| | 235B-A22B-Instruct | link | — |
| | 235B-A22B-Thinking | link | — |
| Qwen3-2507 | 30B-A3B-Instruct | link | — |
| | 30B-A3B-Thinking | link | — |
| | 235B-A22B-Thinking | link | — |
| | 235B-A22B-Instruct | link | — |
| Qwen3-Coder | 30B-A3B | link | — |
| | 480B-A35B | link | — |
| Granite-4.0 (new) | H-Small | link | link |
| GLM (new) | 4.6 | link | — |
| | 4.5-Air | link | — |
| Kimi-K2-0905 | 1T | link | — |
| Gemma 3n | E2B | link | link |
| | E4B | link | link |
| DeepSeek-R1-0528 | R1-0528-Qwen3-8B | link | link |
| | R1-0528 | link | — |
| Mistral | Magistral Small (2509) | link | link |
| | Magistral Small (2507) | link | link |
| | Small 3.2 24B (2506) | link | link |
| FLUX.1 | Kontext-dev | link | — |
| Qwen3 | 0.6B | link | link |
| | 1.7B | link | link |
| | 4B | link | link |
| | 8B | link | link |
| | 14B | link | link |
| | 30B-A3B | link | link |
| | 32B | link | link |
| | 235B-A22B | link | — |
| Llama 4 | Scout 17B 16E | link | link |
| | Maverick 17B 128E | link | — |
| Grok 2 | 270B | link | — |
| Qwen-2.5 Omni | 3B | link | — |
| | 7B | link | — |
| Phi-4 | Reasoning-plus | link | link |
| | Reasoning | link | link |

DeepSeek models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| DeepSeek-V3.1 | Terminus | link | |
| | V3.1 | link | |
| DeepSeek-V3 | V3-0324 | link | — |
| | V3 | link | — |
| DeepSeek-R1 | R1-0528 | link | — |
| | R1-0528-Qwen3-8B | link | link |
| | R1 | link | — |
| | R1 Zero | link | — |
| | Distill Llama 3 8B | link | link |
| | Distill Llama 3.3 70B | link | link |
| | Distill Qwen 2.5 1.5B | link | link |
| | Distill Qwen 2.5 7B | link | link |
| | Distill Qwen 2.5 14B | link | link |
| | Distill Qwen 2.5 32B | link | link |

Llama models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| Llama 4 | Scout 17B-16E | link | link |
| | Maverick 17B-128E | link | — |
| Llama 3.3 | 70B | link | link |
| Llama 3.2 | 1B | link | link |
| | 3B | link | link |
| | 11B Vision | — | link |
| | 90B Vision | — | link |
| Llama 3.1 | 8B | link | link |
| | 70B | — | link |
| | 405B | — | link |
| Llama 3 | 8B | — | link |
| | 70B | — | link |
| Llama 2 | 7B | — | link |
| | 13B | — | link |
| CodeLlama | 7B | — | link |
| | 13B | — | link |
| | 34B | — | link |

Gemma models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| Gemma 3n | E2B | link | link |
| | E4B | link | link |
| Gemma 3 | 270M | link | link |
| | 1B | link | link |
| | 4B | link | link |
| | 12B | link | link |
| | 27B | link | link |
| MedGemma | 4B (vision) | link | link |
| | 27B (vision) | link | link |
| Gemma 2 | 2B | link | link |
| | 9B | — | link |
| | 27B | — | link |

Qwen models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| Qwen 3 | 0.6B | link | link |
| | 1.7B | link | link |
| | 4B | link | link |
| | 8B | link | link |
| | 14B | link | link |
| | 30B-A3B | link | link |
| | 32B | link | link |
| | 235B-A22B | link | — |
| Qwen 2.5 Omni | 3B | link | — |
| | 7B | link | — |
| Qwen 2.5 VL | 3B | link | link |
| | 7B | link | link |
| | 32B | link | link |
| | 72B | link | link |
| Qwen 2.5 | 0.5B | — | link |
| | 1.5B | — | link |
| | 3B | — | link |
| | 7B | — | link |
| | 14B | — | link |
| | 32B | — | link |
| | 72B | — | link |
| Qwen 2.5 Coder (128K) | 0.5B | link | link |
| | 1.5B | link | link |
| | 3B | link | link |
| | 7B | link | link |
| | 14B | link | link |
| | 32B | link | link |
| QwQ | 32B | link | link |
| QVQ (preview) | 72B | — | link |
| Qwen 2 (chat) | 1.5B | — | link |
| | 7B | — | link |
| | 72B | — | link |
| Qwen 2 VL | 2B | — | link |
| | 7B | — | link |
| | 72B | — | link |

Mistral models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| Mistral Small | 3.2-24B (2506) | link | link |
| | 3.1-24B (2503) | link | link |
| | 3-24B (2501) | link | link |
| Magistral | Small-24B (2506) | link | link |
| Devstral | Small-24B (2507) | link | link |
| | Small-24B (2505) | link | link |
| Pixtral | 12B (2409) | — | link |
| Mistral Small | 2409-22B | — | link |
| Mistral NeMo | 12B (2407) | link | link |
| Mistral Large | 2407 | — | link |
| Mistral 7B | v0.3 | — | link |
| | v0.2 | — | link |
| Mixtral | 8×7B | — | link |

Phi models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| Phi-4 | Reasoning-plus | link | link |
| | Reasoning | link | link |
| | Mini-Reasoning | link | link |
| | Phi-4 (instruct) | link | link |
| | mini (instruct) | link | link |
| Phi-3.5 | mini | — | link |
| Phi-3 | mini | — | link |
| | medium | — | link |

Other (GLM, Orpheus, Smol, Llava etc.) models:

| Model | Variant | GGUF | Instruct (4-bit) |
|---|---|---|---|
| GLM | 4.5-Air | link | |
| | 4.5 | 4.5 | |
| | 4-32B-0414 | 4-32B-0414 | |
| Hunyuan | A13B | link | — |
| Orpheus | 0.1-ft (3B) | link | link |
| LLaVA | 1.5 (7B) | — | link |
| | 1.6 Mistral (7B) | — | link |
| TinyLlama | Chat | — | link |
| SmolLM 2 | 135M | link | link |
| | 360M | link | link |
| | 1.7B | link | link |
| Zephyr-SFT | 7B | — | link |
| Yi | 6B (v1.5) | — | link |
| | 6B (v1.0) | — | link |
| | 34B (chat) | — | link |
| | 34B (base) | — | link |

{% endtab %}

{% tab title="• Instruct 16-bit" %} 16-bit and 8-bit Instruct models are used for inference or fine-tuning:

| Model | Variant | Instruct (16-bit) |
|---|---|---|
| gpt-oss (new) | 20b | link |
| | 120b | link |
| Gemma 3n | E2B | link |
| | E4B | link |
| DeepSeek-R1-0528 | R1-0528-Qwen3-8B | link |
| | R1-0528 | link |
| Mistral | Small 3.2 24B (2506) | link |
| | Small 3.1 24B (2503) | link |
| | Small 3.0 24B (2501) | link |
| | Magistral Small (2506) | link |
| Qwen 3 | 0.6B | link |
| | 1.7B | link |
| | 4B | link |
| | 8B | link |
| | 14B | link |
| | 30B-A3B | link |
| | 32B | link |
| | 235B-A22B | link |
| Llama 4 | Scout 17B-16E | link |
| | Maverick 17B-128E | link |
| Qwen 2.5 Omni | 3B | link |
| | 7B | link |
| Phi-4 | Reasoning-plus | link |
| | Reasoning | link |

DeepSeek models:

| Model | Variant | Instruct (16-bit) |
|---|---|---|
| DeepSeek-V3 | V3-0324 | link |
| | V3 | link |
| DeepSeek-R1 | R1-0528 | link |
| | R1-0528-Qwen3-8B | link |
| | R1 | link |
| | R1 Zero | link |
| | Distill Llama 3 8B | link |
| | Distill Llama 3.3 70B | link |
| | Distill Qwen 2.5 1.5B | link |
| | Distill Qwen 2.5 7B | link |
| | Distill Qwen 2.5 14B | link |
| | Distill Qwen 2.5 32B | link |

Llama models:

| Model | Variant | Instruct (16-bit) |
|---|---|---|
| Llama 4 | Scout 17B-16E | link |
| | Maverick 17B-128E | link |
| Llama 3.3 | 70B | link |
| Llama 3.2 | 1B | link |
| | 3B | link |
| | 11B Vision | link |
| | 90B Vision | link |
| Llama 3.1 | 8B | link |
| | 70B | link |
| | 405B | link |
| Llama 3 | 8B | link |
| | 70B | link |
| Llama 2 | 7B | link |

Gemma models:

| Model | Variant | Instruct (16-bit) |
|---|---|---|
| Gemma 3n | E2B | link |
| | E4B | link |
| Gemma 3 | 1B | link |
| | 4B | link |
| | 12B | link |
| | 27B | link |
| Gemma 2 | 2B | link |
| | 9B | link |
| | 27B | link |

Qwen models:

| Model | Variant | Instruct (16-bit) |
|---|---|---|
| Qwen 3 | 0.6B | link |
| | 1.7B | link |
| | 4B | link |
| | 8B | link |
| | 14B | link |
| | 30B-A3B | link |
| | 32B | link |
| | 235B-A22B | link |
| Qwen 2.5 Omni | 3B | link |
| | 7B | link |
| Qwen 2.5 VL | 3B | link |
| | 7B | link |
| | 32B | link |
| | 72B | link |
| Qwen 2.5 | 0.5B | link |
| | 1.5B | link |
| | 3B | link |
| | 7B | link |
| | 14B | link |
| | 32B | link |
| | 72B | link |
| Qwen 2.5 Coder (128K) | 0.5B | link |
| | 1.5B | link |
| | 3B | link |
| | 7B | link |
| | 14B | link |
| | 32B | link |
| QwQ | 32B | link |
| QVQ (preview) | 72B | — |
| Qwen 2 (chat) | 1.5B | link |
| | 7B | link |
| | 72B | link |
| Qwen 2 VL | 2B | link |
| | 7B | link |
| | 72B | link |

Mistral models:

| Model | Variant | Instruct (16-bit) |
|---|---|---|
| Mistral | Small 2409-22B | link |
| Mistral | Large 2407 | link |
| Mistral | 7B v0.3 | link |
| Mistral | 7B v0.2 | link |
| Pixtral | 12B 2409 | link |
| Mixtral | 8×7B | link |
| Mistral NeMo | 12B 2407 | link |
| Devstral | Small 2505 | link |

Phi models:

| Model | Variant | Instruct (16-bit) |
|---|---|---|
| Phi-4 | Reasoning-plus | link |
| | Reasoning | link |
| | Phi-4 (core) | link |
| | Mini-Reasoning | link |
| | Mini | link |
| Phi-3.5 | Mini | link |
| Phi-3 | Mini | link |
| | Medium | link |

Text-to-Speech (TTS) models:

| Model | Instruct (16-bit) |
|---|---|
| Orpheus-3B (v0.1 ft) | link |
| Orpheus-3B (v0.1 pt) | link |
| Sesame-CSM 1B | link |
| Whisper Large V3 (STT) | link |
| Llasa-TTS 1B | link |
| Spark-TTS 0.5B | link |
| Oute-TTS 1B | link |

{% endtab %}

{% tab title="• Base 4 + 16-bit" %} Base models are usually used for fine-tuning purposes:

| Model | Variant | Base (16-bit) | Base (4-bit) |
|---|---|---|---|
| Gemma 3n | E2B | link | link |
| | E4B | link | link |
| Qwen 3 | 0.6B | link | link |
| | 1.7B | link | link |
| | 4B | link | link |
| | 8B | link | link |
| | 14B | link | link |
| | 30B-A3B | link | link |
| Llama 4 | Scout 17B 16E | link | link |
| | Maverick 17B 128E | link | — |

Llama models:

| Model | Variant | Base (16-bit) | Base (4-bit) |
|---|---|---|---|
| Llama 4 | Scout 17B 16E | link | — |
| | Maverick 17B 128E | link | — |
| Llama 3.3 | 70B | link | — |
| Llama 3.2 | 1B | link | — |
| | 3B | link | — |
| | 11B Vision | link | — |
| | 90B Vision | link | — |
| Llama 3.1 | 8B | link | — |
| | 70B | link | — |
| Llama 3 | 8B | link | link |
| Llama 2 | 7B | link | link |
| | 13B | link | link |

Qwen models:

| Model | Variant | Base (16-bit) | Base (4-bit) |
|---|---|---|---|
| Qwen 3 | 0.6B | link | link |
| | 1.7B | link | link |
| | 4B | link | link |
| | 8B | link | link |
| | 14B | link | link |
| | 30B-A3B | link | link |
| Qwen 2.5 | 0.5B | link | link |
| | 1.5B | link | link |
| | 3B | link | link |
| | 7B | link | link |
| | 14B | link | link |
| | 32B | link | link |
| | 72B | link | link |
| Qwen 2 | 1.5B | link | link |
| | 7B | link | link |


Gemma models:

| Model | Variant | Base (16-bit) | Base (4-bit) |
|---|---|---|---|
| Gemma 3 | 1B | link | link |
| | 4B | link | link |
| | 12B | link | link |
| | 27B | link | link |
| Gemma 2 | 2B | link | — |
| | 9B | link | — |
| | 27B | link | — |

Mistral models:

| Model | Variant | Base (16-bit) | Base (4-bit) |
|---|---|---|---|
| Mistral | Small 24B 2501 | link | — |
| | NeMo 12B 2407 | link | — |
| | 7B v0.3 | link | link |
| | 7B v0.2 | link | link |
| | Pixtral 12B 2409 | link | — |

Other (TTS, TinyLlama) models:

| Model | Variant | Base (16-bit) | Base (4-bit) |
|---|---|---|---|
| TinyLlama | 1.1B (Base) | link | link |
| Orpheus-3b | 0.1-pretrained | link | link |

{% endtab %}

{% endtabs %}

Windows Installation

URL: llms-txt#windows-installation

Contents:

- Method #1 - Docker:

- Method #2 - Windows directly:

- Notes

- Advanced/Troubleshooting

- Method #3 - Windows using PowerShell:

- Method #4 - Windows via WSL:

See how to install Unsloth on Windows with or without WSL.

On Windows, `pip install unsloth` now works; however, you must install PyTorch first.

Method #1 - Docker:

Docker might be the easiest way for Windows users to get started with Unsloth, since no setup is needed and there are no dependency issues. `unsloth/unsloth` is Unsloth's only Docker image. For Blackwell and 50-series GPUs, use this same image; no separate image is needed.

For installation instructions, please follow our Docker guide, otherwise here is a quickstart guide:

{% stepper %} {% step %}

Install Docker and NVIDIA Container Toolkit.

Install Docker via Linux or Desktop (other). Then install NVIDIA Container Toolkit:

```bash
export NVIDIA_CONTAINER_TOOLKIT_VERSION=1.17.8-1
sudo apt-get update && sudo apt-get install -y \
  nvidia-container-toolkit=${NVIDIA_CONTAINER_TOOLKIT_VERSION} \
  nvidia-container-toolkit-base=${NVIDIA_CONTAINER_TOOLKIT_VERSION} \
  libnvidia-container-tools=${NVIDIA_CONTAINER_TOOLKIT_VERSION} \
  libnvidia-container1=${NVIDIA_CONTAINER_TOOLKIT_VERSION}
```

Run the container.


Access Jupyter Lab

Go to http://localhost:8888 and open Unsloth. Access the unsloth-notebooks tabs to see Unsloth notebooks.

{% endstep %}

Start training with Unsloth

If you're new, follow our step-by-step Fine-tuning Guide, RL Guide or just save/copy any of our premade notebooks. {% endstep %} {% endstepper %}

Method #2 - Windows directly:

{% hint style="info" %} Python 3.13 now works with Unsloth! {% endhint %}

{% stepper %} {% step %} Install NVIDIA Video Driver

You should install the latest version of your GPU's driver. Download drivers here: NVIDIA GPU Driver {% endstep %}

{% step %} Install Visual Studio C++

You will need Visual Studio, with C++ installed. By default, C++ is not installed with Visual Studio, so make sure you select all of the C++ options. Also select options for Windows 10/11 SDK.

- Launch the Installer here: Visual Studio Community Edition

- In the installer, navigate to individual components and select all the options listed here:

- .NET Framework 4.8 SDK

- .NET Framework 4.7.2 targeting pack

- C# and Visual Basic Roslyn compilers

- MSBuild

- MSVC v143 - VS 2022 C++ x64/x86 build tools

- C++ 2022 Redistributable Update

- C++ CMake tools for Windows

- C++/CLI support for v143 build tools (Latest)

- MSBuild support for LLVM (clang-cl) toolset

- C++ Clang Compiler for Windows (19.1.1)

- Windows 11 SDK (10.0.22621.0)

- Windows Universal CRT SDK

- C++ 2022 Redistributable MSMs

Easier method: alternatively, open an elevated Command Prompt or PowerShell:

- Search for "cmd" or "PowerShell", right-click it, and choose "Run as administrator."

- Paste and run this command (update the Visual Studio path if necessary):

{% step %} Install Python and CUDA Toolkit

Follow the instructions to install CUDA Toolkit.

Then install Miniconda (which has Python) here: https://www.anaconda.com/docs/getting-started/miniconda/install {% endstep %}

{% step %} Install PyTorch

You will need the correct version of PyTorch that is compatible with your CUDA drivers, so make sure to select them carefully. Install PyTorch {% endstep %}

{% step %} Install Unsloth

Open Conda command prompt or your terminal with Python and run the command:

{% endstep %} {% endstepper %}

{% hint style="warning" %} If you plan to use GRPO or vLLM, note that vLLM currently does not support Windows directly, only via WSL or Linux. {% endhint %}

To run Unsloth directly on Windows:

- Install Triton from this Windows fork and follow the instructions here (be aware that the Windows fork requires PyTorch >= 2.4 and CUDA 12)

- In the SFTTrainer, set `dataset_num_proc=1` to avoid a crashing issue:

Advanced/Troubleshooting

For advanced installation instructions or if you see weird errors during installations:

- Install `torch` and `triton`. Go to https://pytorch.org to install it. For example, `pip install torch torchvision torchaudio triton`.
- Confirm that CUDA is installed correctly. Try `nvcc`. If that fails, you need to install `cudatoolkit` or CUDA drivers.
- Install `xformers` manually. You can try installing `vllm` and seeing if `vllm` succeeds. Check if `xformers` succeeded with `python -m xformers.info`. Go to https://github.com/facebookresearch/xformers. Another option is to install `flash-attn` for Ampere GPUs.
- Double-check that your versions of Python, CUDA, CUDNN, `torch`, `triton`, and `xformers` are compatible with one another. The PyTorch Compatibility Matrix may be useful.
- Finally, install `bitsandbytes` and check it with `python -m bitsandbytes`.
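To see at a glance which of these packages are installed, a small standard-library script can print their versions. This is a sketch using `importlib.metadata`; the package list is our assumption, and the Windows Triton fork may be installed under a different distribution name (possibly `triton-windows`), in which case add that name to the list. `installed_versions` is a hypothetical helper.

```python
from importlib.metadata import PackageNotFoundError, version

PACKAGES = ["torch", "triton", "xformers", "bitsandbytes", "unsloth"]

def installed_versions(names):
    """Map each distribution name to its installed version, or None if missing."""
    found = {}
    for name in names:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found

for name, ver in installed_versions(PACKAGES).items():
    print(f"{name:14} {ver or 'NOT INSTALLED'}")
```

This only confirms that a package is installed; it does not check CUDA compatibility, so still run the `nvcc`, `python -m xformers.info` and `python -m bitsandbytes` checks above.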

Method #3 - Windows using PowerShell:

Step 1: Install Prerequisites

- Install NVIDIA CUDA Toolkit:

- Download and install the appropriate version of the NVIDIA CUDA Toolkit from CUDA Downloads.

- Reboot your system after installation if prompted.

- Note: No additional setup is required after installation for Unsloth.

- Install Microsoft C++ Build Tools:

- Download and install Microsoft Build Tools for Visual Studio from the official website.

- During installation, select the C++ build tools workload.

Ensure the MSVC compiler toolset is included.

- Set Environment Variables for the C++ Compiler:

- Open the System Properties window (search for "Environment Variables" in the Start menu).

- Click "Environment Variables…".

- Add or update the following under System variables:

  - `CC`: Path to the `cl.exe` C++ compiler.
    Example (adjust if your version differs):
    - `CC`:
  - `CXX`: Same path as `CC`.
- Click OK to save changes.
- Verify: Open a new terminal and type `cl`. It should show version info.

- Install Conda

- Download and install Miniconda from the official website

- Follow installation instruction from the website

- To check whether `conda` is already installed, run `conda` in your PowerShell.

Step 2: Run the Unsloth Installation Script

1. Download the unsloth_windows.ps1 PowerShell script by going through this link.
2. Open PowerShell as Administrator:
   - Right-click Start and select "Windows PowerShell (Admin)".
3. Navigate to the script’s location using `cd`:
4. Run the script:

Step 3: Using Unsloth

Activate the environment after the installation completes:

Unsloth and its dependencies are now ready!

Method #4 - Windows via WSL:

WSL is the Windows Subsystem for Linux.

- Install Python through Python's official site.

- Start WSL (Should already be preinstalled). Open command prompt as admin then run:

Optional: If WSL is not preinstalled, go to the Microsoft store and search "Ubuntu" and the app that says Ubuntu will be WSL. Install it and run it and continue from there.

- Optional: Install Jupyter Notebook to run in a Colab-like environment:
- Launch Jupyter Notebook:

```bash
jupyter notebook
```

- Download any Colab notebook from Unsloth, import it into your Jupyter Notebook, adjust the parameters as needed, and execute the script.

Examples:

Example 1 (bash):

```bash
docker run -d -e JUPYTER_PASSWORD="mypassword" \
  -p 8888:8888 -p 2222:22 \
  -v $(pwd)/work:/workspace/work \
  --gpus all \
  unsloth/unsloth
```

Example 2 (unknown):

```bat
"C:\Program Files (x86)\Microsoft Visual Studio\Installer\vs_installer.exe" modify ^
  --installPath "C:\Program Files\Microsoft Visual Studio\2022\Community" ^
  --add Microsoft.Net.Component.4.8.SDK ^
  --add Microsoft.Net.Component.4.7.2.TargetingPack ^
  --add Microsoft.VisualStudio.Component.Roslyn.Compiler ^
  --add Microsoft.Component.MSBuild ^
  --add Microsoft.VisualStudio.Component.VC.Tools.x86.x64 ^
  --add Microsoft.VisualStudio.Component.VC.Redist.14.Latest ^
  --add Microsoft.VisualStudio.Component.VC.CMake.Project ^
  --add Microsoft.VisualStudio.Component.VC.CLI.Support ^
  --add Microsoft.VisualStudio.Component.VC.Llvm.Clang ^
  --add Microsoft.VisualStudio.ComponentGroup.ClangCL ^
  --add Microsoft.VisualStudio.Component.Windows11SDK.22621 ^
  --add Microsoft.VisualStudio.Component.Windows10SDK.19041 ^
  --add Microsoft.VisualStudio.Component.UniversalCRT.SDK ^
  --add Microsoft.VisualStudio.Component.VC.Redist.MSM
```

Example 3 (unknown):

pip install "unsloth[windows] @ git+https://github.com/unslothai/unsloth.git"

Example 4 (python):

trainer = SFTTrainer(

dataset_num_proc=1,

...

)

Prepare batched input with your image file

URL: llms-txt#prepare-batched-input-with-your-image-file

from PIL import Image
from vllm import SamplingParams

image_1 = Image.open("path/to/your/image_1.png").convert("RGB")
image_2 = Image.open("path/to/your/image_2.png").convert("RGB")
prompt = "<image>\nFree OCR."

model_input = [
    {"prompt": prompt, "multi_modal_data": {"image": image_1}},
    {"prompt": prompt, "multi_modal_data": {"image": image_2}},
]

sampling_param = SamplingParams(
    temperature=0.0,
    max_tokens=8192,
    # ngram logit processor args
    extra_args=dict(
        ngram_size=30,
        window_size=90,
        whitelist_token_ids={128821, 128822},  # whitelist: <td>, </td>
    ),
    skip_special_tokens=False,
)

DeepSeek-V3-0324: How to Run Locally

URL: llms-txt#deepseek-v3-0324:-how-to-run-locally

Contents:

- ⚙️ Official Recommended Settings

- 📖 Tutorial: How to Run DeepSeek-V3 in llama.cpp

How to run DeepSeek-V3-0324 locally using our dynamic quants, which recover accuracy

{% hint style="info" %} Please see https://docs.unsloth.ai/basics/deepseek-r1-0528-how-to-run-locally (May 28th 2025 update) to learn how to run DeepSeek faster and more efficiently! {% endhint %}

DeepSeek is at it again! After releasing V3, R1 Zero and R1 back in December 2024 and January 2025, DeepSeek updated their checkpoints / models for V3, and released a March update!

According to DeepSeek, MMLU-Pro jumped 5.3 points to 81.2%, GPQA rose 9.3 points, AIME 19.8 points and LiveCodeBench 10.0 points! They provided a plot showing how they compared to the previous V3 checkpoint and other models like GPT-4.5 and Claude Sonnet 3.7. But how do we run a 671 billion parameter model locally?

| MoE Bits | Type | Disk Size | Accuracy | Link | Details |
|---|---|---|---|---|---|
| 1.78bit | IQ1_S | 173GB | Ok | Link | 2.06/1.56bit |
| 1.93bit | IQ1_M | 183GB | Fair | Link | 2.5/2.06/1.56 |
| 2.42bit | IQ2_XXS | 203GB | Suggested | Link | 2.5/2.06bit |
| 2.71bit | Q2_K_XL | 231GB | Suggested | Link | 3.5/2.5bit |
| 3.5bit | Q3_K_XL | 320GB | Great | Link | 4.5/3.5bit |
| 4.5bit | Q4_K_XL | 406GB | Best | Link | 5.5/4.5bit |

{% hint style="success" %} DeepSeek V3's original upload is in float8, which takes 715GB. Using Q4_K_M halves the file size to 404GB or so, and our dynamic 1.78bit quant fits in around 151GB. We suggest using our 2.7bit quant to balance size and accuracy! The 2.4bit one also works well! {% endhint %}

⚙️ Official Recommended Settings

According to DeepSeek, these are the recommended settings for inference:

- Temperature of 0.3 (Maybe 0.0 for coding as seen here)

- Min_P of 0.00 (optional, but 0.01 works well, llama.cpp default is 0.1)

- Chat template: `<|User|>Create a simple playable Flappy Bird Game in Python. Place the final game inside of a markdown section.<|Assistant|>`

- A BOS token of `<|begin▁of▁sentence|>` is auto-added during tokenization (do NOT add it manually!)

- DeepSeek mentioned using a system prompt as well (optional). It's in Chinese: `该助手为DeepSeek Chat,由深度求索公司创造。\n今天是3月24日,星期一。` which translates to: `The assistant is DeepSeek Chat, created by DeepSeek.\nToday is Monday, March 24th.`

- For KV cache quantization, use 8bit, NOT 4bit; we found 4bit to do noticeably worse.
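As a minimal sketch of the format above, the function below assembles a single-turn DeepSeek-V3 prompt string, for example to pass to `llama-cli --prompt`. `build_deepseek_v3_prompt` is a hypothetical helper; it assumes the optional system prompt sits directly before the first `<|User|>` tag, and it deliberately leaves out the BOS token because the tokenizer adds it. For multi-turn chats, prefer the chat template shipped with the model.

```python
BOS = "<|begin▁of▁sentence|>"  # added by the tokenizer; never put it in the prompt yourself


def build_deepseek_v3_prompt(user_message: str, system_prompt: str = "") -> str:
    """Format one user turn in the DeepSeek-V3 chat format, ready for generation."""
    prompt = f"{system_prompt}<|User|>{user_message}<|Assistant|>"
    if BOS in prompt:
        raise ValueError("Do not add the BOS token manually; the tokenizer adds it.")
    return prompt


print(build_deepseek_v3_prompt(
    "Create a simple playable Flappy Bird Game in Python. "
    "Place the final game inside of a markdown section."
))
```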

📖 Tutorial: How to Run DeepSeek-V3 in llama.cpp

- Obtain the latest `llama.cpp` on GitHub here. You can follow the build instructions below as well. Change `-DGGML_CUDA=ON` to `-DGGML_CUDA=OFF` if you don't have a GPU or just want CPU inference.

{% hint style="warning" %}

NOTE using -DGGML_CUDA=ON for GPUs might take 5 minutes to compile. CPU only takes 1 minute to compile. You might be interested in llama.cpp's precompiled binaries.

{% endhint %}

- Download the model (after running `pip install huggingface_hub hf_transfer`). You can choose `UD-IQ1_S` (dynamic 1.78bit quant) or other quantized versions like `Q4_K_M`. I recommend using our 2.7bit dynamic quant `UD-Q2_K_XL` to balance size and accuracy. More versions at: https://huggingface.co/unsloth/DeepSeek-V3-0324-GGUF
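A minimal download sketch, assuming `huggingface_hub`'s `snapshot_download` with an `allow_patterns` glob so that only the files of one quant are fetched instead of the whole repo. `quant_patterns` and `download_quant` are hypothetical helper names, and the default `local_dir` is just a choice.

```python
import os
from typing import List

# Optional: faster downloads via hf_transfer (requires `pip install hf_transfer`).
# Must be set before huggingface_hub is imported.
os.environ["HF_HUB_ENABLE_HF_TRANSFER"] = "1"

REPO_ID = "unsloth/DeepSeek-V3-0324-GGUF"


def quant_patterns(quant: str) -> List[str]:
    """Glob pattern selecting only the files whose path contains the quant name."""
    return [f"*{quant}*"]


def download_quant(quant: str = "UD-Q2_K_XL", local_dir: str = REPO_ID) -> str:
    """Download one quant of the repo and return the local folder path."""
    # Imported here so the helpers above work even without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(
        repo_id=REPO_ID,
        local_dir=local_dir,
        allow_patterns=quant_patterns(quant),
    )
```

Call `download_quant("UD-Q2_K_XL")` for the suggested 2.71bit dynamic quant, or `download_quant("UD-IQ1_S")` for the 1.78bit one; both are several hundred GB or close to it, so check free disk space first.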


Examples:

Example 1 (bash):

apt-get update

apt-get install pciutils build-essential cmake curl libcurl4-openssl-dev -y

git clone https://github.com/ggml-org/llama.cpp

cmake llama.cpp -B llama.cpp/build \

-DBUILD_SHARED_LIBS=OFF -DGGML_CUDA=ON -DLLAMA_CURL=ON

cmake --build llama.cpp/build --config Release -j --clean-first --target llama-quantize llama-cli llama-gguf-split

cp llama.cpp/build/bin/llama-* llama.cpp

Quantization-Aware Training (QAT)

URL: llms-txt#quantization-aware-training-(qat)

Contents:

- :books:Quantization

- :fire:Smarter Quantization

- :mag:Quantization-Aware Training

- :sparkles:QAT + LoRA finetuning

- :teapot:Exporting QAT models

Quantize models to 4-bit with Unsloth and PyTorch to recover accuracy.

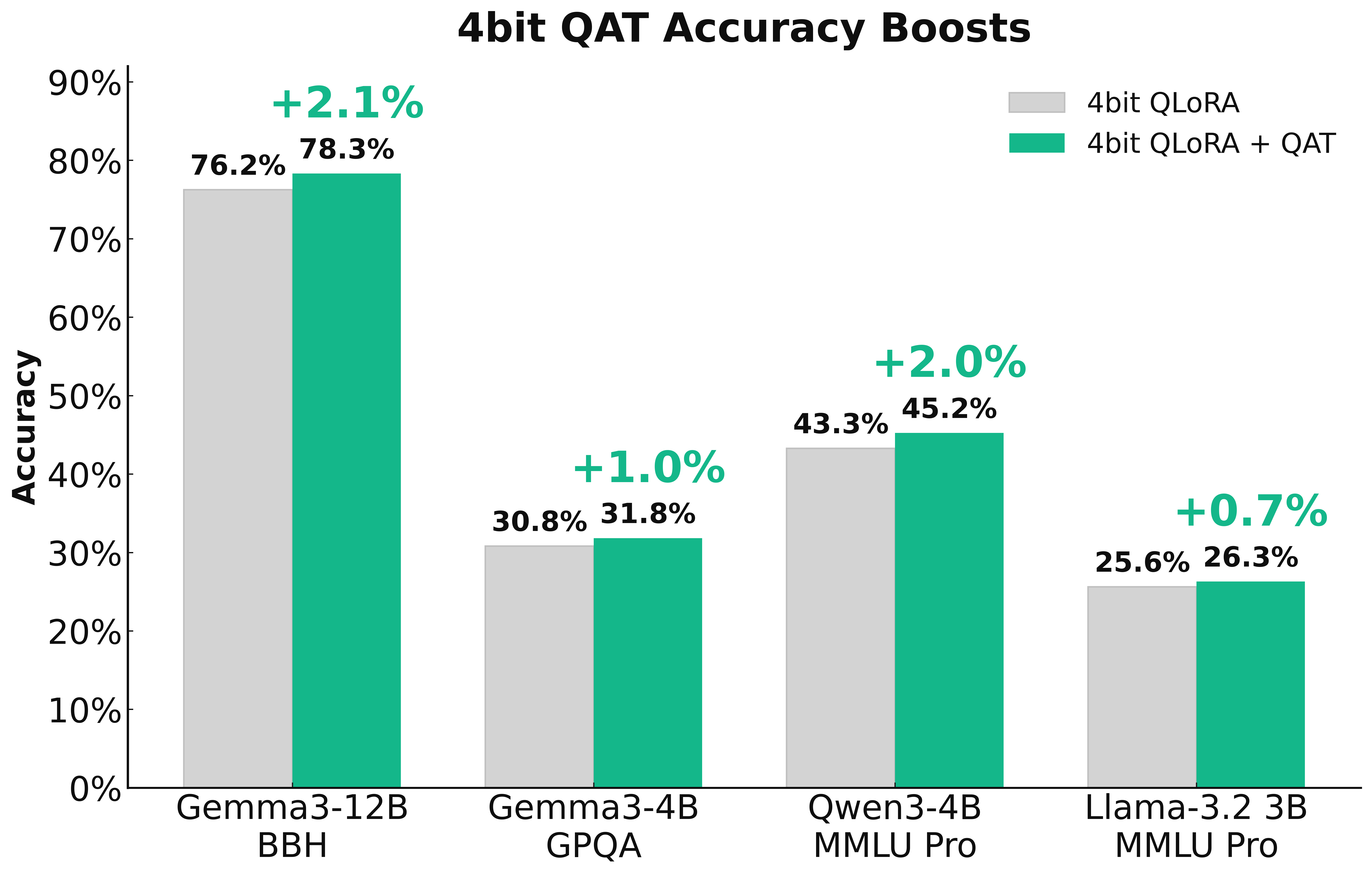

In collaboration with PyTorch, we're introducing QAT (Quantization-Aware Training) in Unsloth to enable trainable quantization that recovers as much accuracy as possible. This results in significantly better model quality compared to standard 4-bit naive quantization. QAT can recover up to 70% of the lost accuracy and achieve a 1–3% model performance improvement on benchmarks such as GPQA and MMLU Pro.

Try QAT with our free Qwen3 (4B) notebook

:books:Quantization

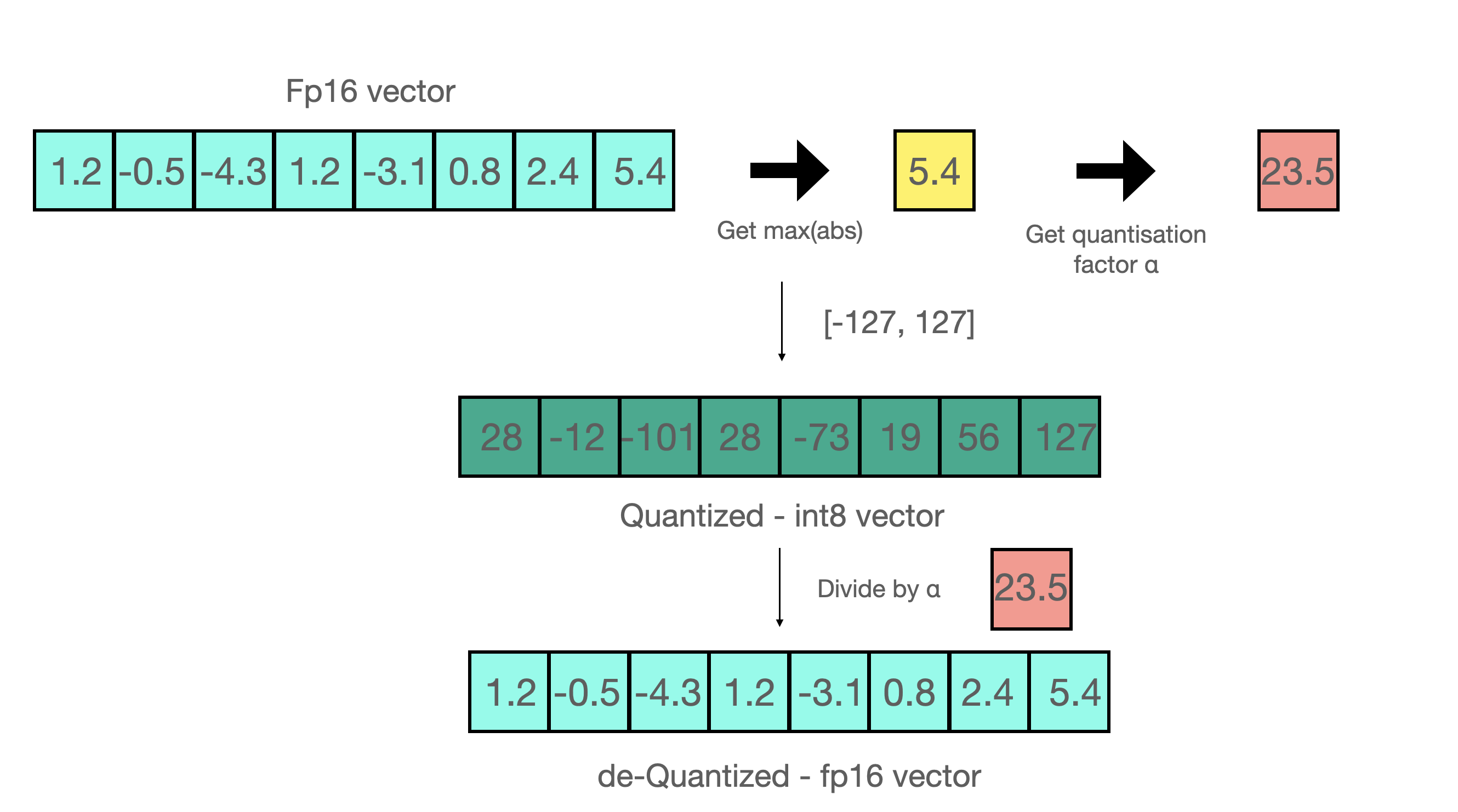

{% columns %} {% column width="50%" %} Naively quantizing a model is called post-training quantization (PTQ). For example, assume we want to quantize to 8bit integers:

- Find `max(abs(W))`

- Find `a = 127/max(abs(W))`, where 127 is int8's maximum value

- Quantize via `qW = int8(round(W * a))` {% endcolumn %}

{% column width="50%" %}

Dequantizing back to 16 bits simply does the reverse operation via `float16(qW) / a`. Post-training quantization (PTQ) can greatly reduce storage and inference costs, but quite often degrades accuracy when representing high-precision values with fewer bits, especially at 4-bit or lower. One way to solve this is to use our dynamic GGUF quants, which use a calibration dataset to change the quantization procedure and allocate more importance to important weights. The other way is to make quantization smarter, by making it trainable or learnable! {% endcolumn %} {% endcolumns %}
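The three PTQ steps and the dequantization can be written in a few lines of plain Python. In this sketch a list of floats stands in for a weight tensor, and `quantize_int8` / `dequantize_int8` are illustrative names, not an Unsloth or PyTorch API. It uses a single absmax scale for the whole tensor; production schemes often use per-channel or per-group scales instead.

```python
def quantize_int8(W):
    """Absmax post-training quantization of a list of floats to int8 values."""
    max_abs = max(abs(w) for w in W)
    if max_abs == 0:
        return [0] * len(W), 1.0  # all-zero weights: any scale works
    a = 127 / max_abs                 # scale: the largest |w| maps to 127
    qW = [round(w * a) for w in W]    # every value lies in [-127, 127]
    return qW, a


def dequantize_int8(qW, a):
    """Reverse operation qW / a (stored as 16-bit floats in practice)."""
    return [q / a for q in qW]


W = [0.5, -1.27, 0.01, 0.9]
qW, a = quantize_int8(W)
W_hat = dequantize_int8(qW, a)
print(qW)  # [50, -127, 1, 90]
# Per-element rounding error is at most 0.5 / a.
print(max(abs(w - wh) for w, wh in zip(W, W_hat)))
```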

:fire:Smarter Quantization

To enable smarter quantization, we collaborated with the TorchAO team to add Quantization-Aware Training (QAT) directly inside of Unsloth - so now you can fine-tune models in Unsloth and then export them to 4-bit QAT format directly with accuracy improvements!

In fact, QAT recovers 66.9% of Gemma3-4B's lost accuracy on GPQA, increasing raw accuracy by +1.0%. On BBH, Gemma3-12B recovers 45.5%, increasing raw accuracy by +2.1%. QAT has no extra overhead during inference and uses the same disk space and memory as normal naive quantization! So you get all the benefits of low-bit quantization, but with much higher accuracy!

:mag:Quantization-Aware Training

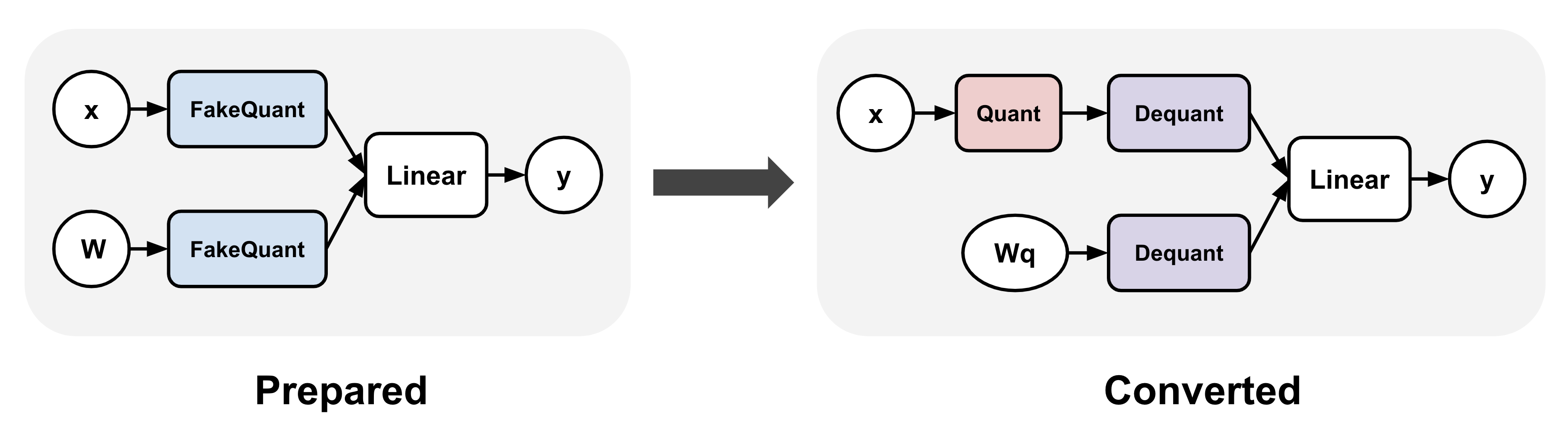

QAT simulates the true quantization procedure by "fake quantizing" weights and optionally activations during training, which typically means rounding high precision values to quantized ones (while staying in high precision dtype, e.g. bfloat16) and then immediately dequantizing them.

TorchAO enables QAT by (1) inserting fake quantize operations into linear layers, and (2) transforming the fake quantize operations into actual quantize and dequantize operations after training to make the model inference-ready. Step 1 enables us to train a more accurate quantization representation.
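A simplified plain-Python sketch of one "fake quantize" step, assuming symmetric signed int4 with one absmax scale per group of weights. `fake_quantize_int4` is a hypothetical name, and `group_size=4` is only for readability; real int4 schemes often use much larger groups (for example 32 or 128). In actual QAT the rounding is typically paired with a straight-through estimator so gradients can flow through it; that part is omitted here.

```python
def fake_quantize_int4(W, group_size=4):
    """Quantize each group of weights to the signed int4 grid, then dequantize at once.

    The output stays floating point (as weights do during QAT), but every value
    now sits exactly on the grid the real int4 weights will use after conversion.
    """
    qmin, qmax = -8, 7  # signed 4-bit integer range
    out = []
    for start in range(0, len(W), group_size):
        group = W[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        scale = max_abs / qmax if max_abs > 0 else 1.0  # one scale per group
        for w in group:
            q = min(max(round(w / scale), qmin), qmax)  # quantize and clamp
            out.append(q * scale)                       # dequantize immediately
    return out


W = [0.1, -0.7, 0.3, 0.0, 2.0, 1.1, -0.5, 0.25]
print(fake_quantize_int4(W))
```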

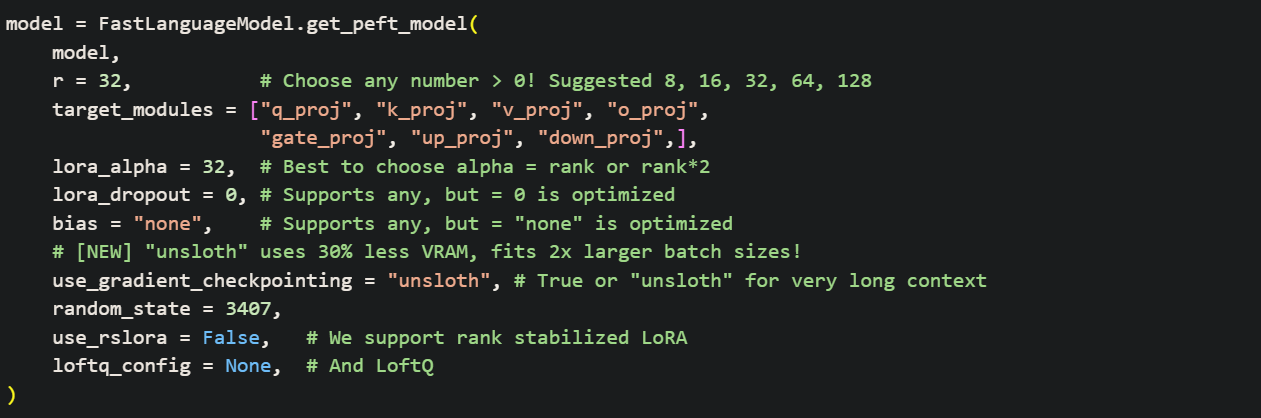

:sparkles:QAT + LoRA finetuning

QAT in Unsloth can also be combined with LoRA fine-tuning to get the best of both worlds: significantly lower storage and compute requirements during training, while mitigating quantization degradation! We support multiple methods via `qat_scheme`, including `fp8-int4`, `fp8-fp8`, `int8-int4` and `int4`. We also plan to add custom definitions for QAT in a follow-up release!


:teapot:Exporting QAT models

After fine-tuning in Unsloth, you can call `model.save_pretrained_torchao` to save your trained model using TorchAO's PTQ format. You can also upload these to the Hugging Face Hub! We support any config, we plan to add text-based methods as well, and to make the process simpler for everyone! But first, we have to prepare the QAT model for the final conversion step via:


And now we can select which QAT style you want:


Examples:

Example 1 (python):

from unsloth import FastLanguageModel

model, tokenizer = FastLanguageModel.from_pretrained(

model_name = "unsloth/Qwen3-4B-Instruct-2507",

max_seq_length = 2048,

load_in_16bit = True,

)

model = FastLanguageModel.get_peft_model(

model,

r = 16,

target_modules = ["q_proj", "k_proj", "v_proj", "o_proj",

"gate_proj", "up_proj", "down_proj",],

lora_alpha = 32,

# We support fp8-int4, fp8-fp8, int8-int4, int4

qat_scheme = "int4",

)

Example 2 (python):

from torchao.quantization import quantize_

from torchao.quantization.qat import QATConfig

quantize_(model, QATConfig(step = "convert"))

Qwen3-2507

URL: llms-txt#qwen3-2507

Contents:

- ⚙️Best Practices

- 📖 Run Qwen3-30B-A3B-2507 Tutorials

- Instruct: Qwen3-30B-A3B-Instruct-2507

Run Qwen3-30B-A3B-2507 and 235B-A22B Thinking and Instruct versions locally on your device!

Qwen released 2507 (July 2025) updates for their Qwen3 4B, 30B and 235B models, introducing both "thinking" and "non-thinking" variants. The non-thinking 'Qwen3-30B-A3B-Instruct-2507' and 'Qwen3-235B-A22B-Instruct-2507' feature a 256K context window, improved instruction following, multilingual capabilities and alignment.

The thinking models 'Qwen3-30B-A3B-Thinking-2507' and 'Qwen3-235B-A22B-Thinking-2507' excel at reasoning, with the 235B achieving SOTA results in logic, math, science, coding, and advanced academic tasks.

Unsloth also now supports fine-tuning and Reinforcement Learning (RL) of Qwen3-2507 models: 2x faster, with 70% less VRAM, and 8x longer context lengths.

Run 30B-A3B • Run 235B-A22B • Fine-tune Qwen3-2507

Unsloth Dynamic 2.0 GGUFs:

| Model | GGUFs to run: |

|---|---|

| Qwen3-4B-2507 | Instruct • Thinking |

| Qwen3-30B-A3B-2507 | Instruct • Thinking |

| Qwen3-235B-A22B-2507 | Instruct • Thinking |

{% hint style="success" %}

The settings for the Thinking and Instruct model are different.

The thinking model uses temperature = 0.6, but the instruct model uses temperature = 0.7

The thinking model uses top_p = 0.95, but the instruct model uses top_p = 0.8

{% endhint %}

To achieve optimal performance, Qwen recommends these settings:

| Instruct Model Settings | Thinking Model Settings |
|---|---|
| Temperature = 0.7 | Temperature = 0.6 |
| Min_P = 0.00 (llama.cpp's default is 0.1) | Min_P = 0.00 (llama.cpp's default is 0.1) |
| Top_P = 0.80 | Top_P = 0.95 |
| TopK = 20 | TopK = 20 |
| presence_penalty = 0.0 to 2.0 (llama.cpp default turns it off, but to reduce repetitions, you can use this) | presence_penalty = 0.0 to 2.0 (llama.cpp default turns it off, but to reduce repetitions, you can use this) |
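The recommended settings can be kept as data and turned into llama.cpp sampling flags (`--temp`, `--min-p`, `--top-p`, `--top-k`, `--presence-penalty`). A small sketch; the dictionary and helper names are hypothetical, and `presence-penalty = 1.0` is just one pick from the suggested 0.0 to 2.0 range.

```python
QWEN3_2507_SAMPLING = {
    "instruct": {"temp": 0.7, "min-p": 0.0, "top-p": 0.80, "top-k": 20, "presence-penalty": 1.0},
    "thinking": {"temp": 0.6, "min-p": 0.0, "top-p": 0.95, "top-k": 20, "presence-penalty": 1.0},
}


def llama_cpp_sampling_args(variant):
    """Return llama.cpp CLI flags for the 'instruct' or 'thinking' variant."""
    args = []
    for flag, value in QWEN3_2507_SAMPLING[variant].items():
        args += [f"--{flag}", str(value)]
    return args


print(" ".join(llama_cpp_sampling_args("thinking")))
# --temp 0.6 --min-p 0.0 --top-p 0.95 --top-k 20 --presence-penalty 1.0
```

The returned list can be appended to a `llama-cli` argument list, e.g. for `subprocess.run`.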

Adequate Output Length: Use an output length of 32,768 tokens, which is adequate for most queries.

The chat template for both the Thinking and Instruct models is below (the Thinking model's output additionally contains <think></think>):
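A minimal sketch that assembles a ChatML string in the `<|im_start|>role\ncontent<|im_end|>` layout used by this template. `to_chatml` is a hypothetical helper; in practice Hugging Face Transformers' `tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)` renders the template bundled with the model and is the safer choice, since it covers model-specific details this sketch ignores (system prompts, tools, thinking blocks).

```python
def to_chatml(messages, add_generation_prompt=True):
    """Render [{'role': ..., 'content': ...}, ...] in the <|im_start|> / <|im_end|> layout."""
    text = "".join(
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    )
    if add_generation_prompt:
        text += "<|im_start|>assistant\n"  # the model continues writing from here
    return text


print(to_chatml([{"role": "user", "content": "Hey there!"}]))
```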

📖 Run Qwen3-30B-A3B-2507 Tutorials

Below are guides for the Thinking and Instruct versions of the model.

Instruct: Qwen3-30B-A3B-Instruct-2507

Because this is a non-thinking model, there is no need to set thinking=False, and the model does not generate <think> </think> blocks.

⚙️Best Practices

To achieve optimal performance, Qwen recommends the following settings:

- We suggest using `temperature=0.7, top_p=0.8, top_k=20, and min_p=0.0`, plus `presence_penalty` between 0 and 2 if the framework supports it, to reduce endless repetitions:

- `temperature = 0.7`

- `top_k = 20`

- `min_p = 0.00` (llama.cpp's default is 0.1)

- `top_p = 0.80`

- `presence_penalty = 0.0 to 2.0` (llama.cpp default turns it off, but to reduce repetitions, you can use this). Try 1.0, for example.

- Supports up to `262,144` context natively, but you can set it to `32,768` tokens for less RAM use.

🦙 Ollama: Run Qwen3-30B-A3B-Instruct-2507 Tutorial

- Install `ollama` if you haven't already! You can only run models up to 32B in size.

- Run the model! Note you can call `ollama serve` in another terminal if it fails! We include all our fixes and suggested parameters (temperature etc.) in `params` in our Hugging Face upload!

✨ Llama.cpp: Run Qwen3-30B-A3B-Instruct-2507 Tutorial

- Obtain the latest `llama.cpp` on GitHub here. You can follow the build instructions below as well. Change `-DGGML_CUDA=ON` to `-DGGML_CUDA=OFF` if you don't have a GPU or just want CPU inference.

- You can directly pull from Hugging Face via:

- Download the model (after running `pip install huggingface_hub hf_transfer`). You can choose `UD-Q4_K_XL` or other quantized versions.

Examples:

Example 1 (unknown):

<|im_start|>user

Hey there!<|im_end|>

<|im_start|>assistant

What is 1+1?<|im_end|>

<|im_start|>user

2<|im_end|>

<|im_start|>assistant

Example 2 (bash):

apt-get update

apt-get install pciutils -y

curl -fsSL https://ollama.com/install.sh | sh

Example 3 (bash):

ollama run hf.co/unsloth/Qwen3-30B-A3B-Instruct-2507-GGUF:UD-Q4_K_XL

Example 4 (bash):

apt-get update

apt-get install pciutils build-essential cmake curl libcurl4-openssl-dev -y

git clone https://github.com/ggml-org/llama.cpp

cmake llama.cpp -B llama.cpp/build \

-DBUILD_SHARED_LIBS=OFF -DGGML_CUDA=ON -DLLAMA_CURL=ON

cmake --build llama.cpp/build --config Release -j --clean-first --target llama-cli llama-gguf-split

cp llama.cpp/build/bin/llama-* llama.cpp

Constants:

URL: llms-txt#constants:

WIDTH, HEIGHT =456 ,702 # BACKGROUND_COLOR_LIGHTS=['lightskyblue'] GAP_SIZE=189 #

BIRD_RADIUS=3.

PIPE_SPEED=- ( ) ?

class Game():

def init(self):

self.screen_size=( )

def reset_game_vars(): global current_scor e

set to zero and other initial states.

tokenizer.push_to_hub("your_name/lora_model", token = "...") # Online saving

URL: llms-txt#tokenizer.push_to_hub("your_name/lora_model",-token-=-"...")-#-online-saving

Contents:

- Fine-tuning Voice models vs. Zero-shot voice cloning

This saves the model weights (for LoRA, it might save only adapter weights if the base is not fully fine-tuned). If you used --push_model in CLI or trainer.push_to_hub(), you could upload it to Hugging Face Hub directly.

Now you should have a fine-tuned TTS model in the directory. The next step is to test it out and if supported, you can use llama.cpp to convert it into a GGUF file.

Fine-tuning Voice models vs. Zero-shot voice cloning

People say you can clone a voice with just 30 seconds of audio using models like XTTS - no training required. That’s technically true, but it misses the point.

Zero-shot voice cloning, which is also available in models like Orpheus and CSM, is an approximation. It captures the general tone and timbre of a speaker’s voice, but it doesn’t reproduce the full expressive range. You lose details like speaking speed, phrasing, vocal quirks, and the subtleties of prosody - things that give a voice its personality and uniqueness.

If you just want a different voice and are fine with the same delivery patterns, zero-shot is usually good enough. But the speech will still follow the model’s style, not the speaker’s.

For anything more personalized or expressive, you need training with methods like LoRA to truly capture how someone speaks.

Use the public key in docker run

URL: llms-txt#use-the-public-key-in-docker-run

-e "SSH_KEY=$(cat ~/.ssh/container_key.pub)"

Set CUDA environment variables

URL: llms-txt#set-cuda-environment-variables

ENV CUDA_HOME=/usr/local/cuda-13.0/
ENV CUDA_PATH=$CUDA_HOME
ENV PATH=$CUDA_HOME/bin:$PATH
ENV LD_LIBRARY_PATH=$CUDA_HOME/lib64:$LD_LIBRARY_PATH
ENV C_INCLUDE_PATH=$CUDA_HOME/include:$C_INCLUDE_PATH
ENV CPLUS_INCLUDE_PATH=$CUDA_HOME/include:$CPLUS_INCLUDE_PATH

Generate SSH key pair

URL: llms-txt#generate-ssh-key-pair

ssh-keygen -t rsa -b 4096 -f ~/.ssh/container_key

LoRA Hot Swapping Guide

URL: llms-txt#lora-hot-swapping-guide

Contents:

- 🍧 vLLM LoRA Hot Swapping / Dynamic LoRAs

🍧 vLLM LoRA Hot Swapping / Dynamic LoRAs

To enable LoRA serving for at most 4 LoRAs at a time (these are hot swapped / changed), first set the environment flag to allow hot swapping:

Then, serve it with LoRA support:

To load a LoRA dynamically (set the lora name as well), do:

To remove it from the pool:

Examples:

Example 1 (bash):

export VLLM_ALLOW_RUNTIME_LORA_UPDATING=True

Example 2 (bash):

export VLLM_ALLOW_RUNTIME_LORA_UPDATING=True

vllm serve unsloth/Llama-3.3-70B-Instruct \

--quantization fp8 \

--kv-cache-dtype fp8 \

--gpu-memory-utilization 0.97 \

--max-model-len 65536 \

--enable-lora \

--max-loras 4 \

--max-lora-rank 64

Example 3 (bash):

curl -X POST http://localhost:8000/v1/load_lora_adapter \

-H "Content-Type: application/json" \

-d '{

"lora_name": "LORA_NAME",

"lora_path": "/path/to/LORA"

}'

Example 4 (bash):

curl -X POST http://localhost:8000/v1/unload_lora_adapter \

-H "Content-Type: application/json" \

-d '{

"lora_name": "LORA_NAME"

}'
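The same load and unload calls can be made from Python with only the standard library. A minimal sketch, assuming the server from the examples is listening on `http://localhost:8000` and was started with `VLLM_ALLOW_RUNTIME_LORA_UPDATING=True`; `lora_request` and `send` are hypothetical helper names, and the JSON bodies mirror the curl examples.

```python
import json
import urllib.request
from typing import Optional

BASE_URL = "http://localhost:8000"  # assumed vLLM server address, as in the curl examples


def lora_request(action: str, lora_name: str, lora_path: Optional[str] = None) -> urllib.request.Request:
    """Build a POST request for /v1/load_lora_adapter or /v1/unload_lora_adapter."""
    if action not in ("load", "unload"):
        raise ValueError("action must be 'load' or 'unload'")
    payload = {"lora_name": lora_name}
    if action == "load":
        if lora_path is None:
            raise ValueError("loading an adapter requires lora_path")
        payload["lora_path"] = lora_path
    return urllib.request.Request(
        f"{BASE_URL}/v1/{action}_lora_adapter",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request to the running server and return the response body."""
    with urllib.request.urlopen(request) as response:
        return response.read().decode("utf-8")
```

With the server running, `send(lora_request("load", "LORA_NAME", "/path/to/LORA"))` adds an adapter to the pool and `send(lora_request("unload", "LORA_NAME"))` removes it.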

What Model Should I Use?

URL: llms-txt#what-model-should-i-use?

Contents:

- Llama, Qwen, Mistral, Phi or?

- Instruct or Base Model?

- Instruct Models

- Base Models

- Should I Choose Instruct or Base?

- Fine-tuning models with Unsloth

- Experimentation is Key

Llama, Qwen, Mistral, Phi or?

When preparing for fine-tuning, one of the first decisions you'll face is selecting the right model. Here's a step-by-step guide to help you choose:

{% stepper %} {% step %}

Choose a model that aligns with your use case

- For example, for image-based training, select a vision model such as Llama 3.2 Vision. For code datasets, opt for a specialized model like Qwen Coder 2.5.

- Licensing and Requirements: Different models may have specific licensing terms and system requirements. Be sure to review these carefully to avoid compatibility issues. {% endstep %}

{% step %}

Assess your storage, compute capacity and dataset

- Use our VRAM guideline to determine the VRAM requirements for the model you’re considering.

- Your dataset determines which type of model you should use and how long training will take {% endstep %}

{% step %}

Select a Model and Parameters

- We recommend using the latest model for the best performance and capabilities. For instance, as of January 2025, the leading 70B model is Llama 3.3.

- You can stay up to date by exploring our model catalog to find the newest and most relevant options. {% endstep %}

{% step %}

Choose Between Base and Instruct Models

Further details below: {% endstep %} {% endstepper %}

Instruct or Base Model?

When preparing for fine-tuning, one of the first decisions you'll face is whether to use an instruct model or a base model.

Instruct models are pre-trained and then further trained to follow instructions, making them ready to use without any fine-tuning. These models, including GGUFs and others commonly available, are optimized for direct usage and respond effectively to prompts right out of the box. Instruct models work with conversational chat templates like ChatML or ShareGPT.

Base models, on the other hand, are the original pre-trained versions without instruction fine-tuning. These are specifically designed for customization through fine-tuning, allowing you to adapt them to your unique needs. Base models are compatible with instruction-style templates like Alpaca or Vicuna, but they generally do not support conversational chat templates out of the box.

Should I Choose Instruct or Base?

The decision often depends on the quantity, quality, and type of your data:

- 1,000+ Rows of Data: If you have a large dataset with over 1,000 rows, it's generally best to fine-tune the base model.

- 300–1,000 Rows of High-Quality Data: With a medium-sized, high-quality dataset, fine-tuning the base or instruct model are both viable options.

- Less than 300 Rows: For smaller datasets, the instruct model is typically the better choice. Fine-tuning the instruct model enables it to align with specific needs while preserving its built-in instructional capabilities. This ensures it can follow general instructions without additional input unless you intend to significantly alter its functionality.

- For information on how big your dataset should be, see here
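The rule of thumb above, written as a tiny hypothetical helper. Treating exactly 1,000 rows as part of the middle band is an arbitrary boundary choice, and the middle band assumes the data is high quality, as in the bullet above.

```python
def suggest_starting_model(num_rows):
    """Rule of thumb from the list above: 'base', 'instruct', or either."""
    if num_rows > 1000:
        return "base"
    if num_rows >= 300:
        return "base or instruct"  # assumes the rows are high quality
    return "instruct"


print(suggest_starting_model(500))  # base or instruct
```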

Fine-tuning models with Unsloth

You can change the model name to whichever model you like by matching it with model's name on Hugging Face e.g. 'unsloth/llama-3.1-8b-unsloth-bnb-4bit'.

We recommend starting with Instruct models, as they allow direct fine-tuning using conversational chat templates (ChatML, ShareGPT etc.) and require less data compared to Base models (which use Alpaca, Vicuna etc.). Learn more about the differences between instruct and base models here.

- Model names ending in `unsloth-bnb-4bit` indicate they are Unsloth dynamic 4-bit quants. These models consume slightly more VRAM than standard BitsAndBytes 4-bit models but offer significantly higher accuracy.

- If a model name ends with just `bnb-4bit`, without "unsloth", it refers to a standard BitsAndBytes 4-bit quantization.

- Models with no suffix are in their original 16-bit or 8-bit formats. While they are the original models from the official model creators, we sometimes include important fixes, such as chat template or tokenizer fixes. So it's recommended to use our versions when available.
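A hypothetical helper that applies the naming rules above to a Hugging Face repo id. Checking `unsloth-bnb-4bit` before `bnb-4bit` matters, because every dynamic-quant name also ends in `bnb-4bit`. Repos with other suffixes (for example GGUF uploads) are outside these rules and simply land in the last branch.

```python
def checkpoint_kind(repo_id):
    """Classify a Hugging Face repo id by the naming rules above."""
    name = repo_id.split("/")[-1].lower()
    if name.endswith("unsloth-bnb-4bit"):  # must be checked before plain "bnb-4bit"
        return "unsloth dynamic 4-bit"
    if name.endswith("bnb-4bit"):
        return "standard bitsandbytes 4-bit"
    return "no 4-bit suffix (original 16-bit / 8-bit weights)"


print(checkpoint_kind("unsloth/llama-3.1-8b-unsloth-bnb-4bit"))  # unsloth dynamic 4-bit
```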

Experimentation is Key

{% hint style="info" %} We recommend experimenting with both models when possible. Fine-tune each one and evaluate the outputs to see which aligns better with your goals. {% endhint %}

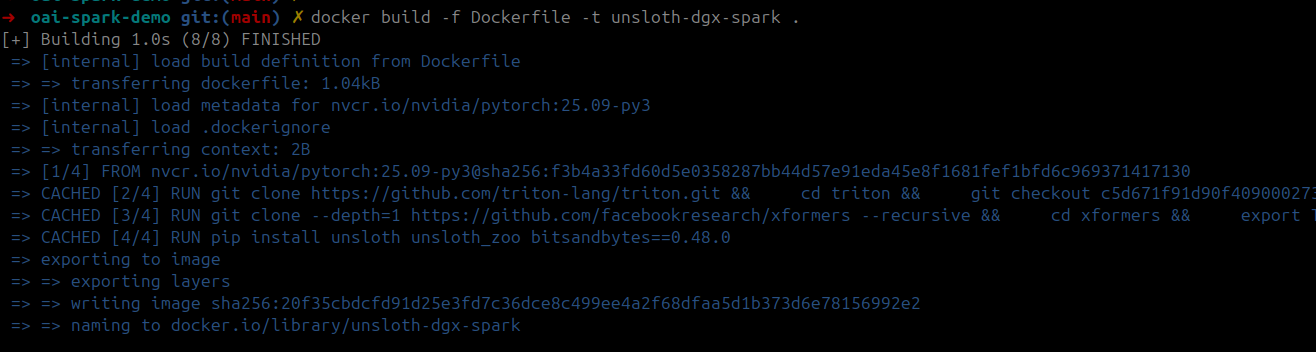

Install unsloth and other dependencies

URL: llms-txt#install-unsloth-and-other-dependencies

RUN pip install unsloth unsloth_zoo bitsandbytes==0.48.0 transformers==4.56.2 trl==0.22.2

Tutorials: How To Fine-tune & Run LLMs

URL: llms-txt#tutorials:-how-to-fine-tune-&-run-llms

Learn how to run and fine-tune models for optimal performance 100% locally with Unsloth.

Create model instance

URL: llms-txt#create-model-instance

llm = LLM( model="unsloth/DeepSeek-OCR", enable_prefix_caching=False, mm_processor_cache_gb=0, logits_processors=[NGramPerReqLogitsProcessor] )

(3) Adding an evaluation loop / OOMs

URL: llms-txt#(3)-adding-an-evaluation-loop-/-ooms

Multi-GPU Training with Unsloth

URL: llms-txt#multi-gpu-training-with-unsloth

Learn how to fine-tune LLMs on multiple GPUs and parallelism with Unsloth.

Unsloth currently supports multi-GPU setups through libraries like Accelerate and DeepSpeed. This means you can already leverage parallelism methods such as FSDP and DDP with Unsloth.

- You can use our Magistral-2509 Kaggle notebook as an example which utilizes multi-GPU Unsloth to fit the 24B parameter model

However, we know that the process can be complex and requires manual setup. We’re working hard to make multi-GPU support much simpler and more user-friendly, and we’ll be announcing official multi-GPU support for Unsloth soon.

In the meantime, to enable multi GPU for DDP, do the following:

- Save your training script to `train.py` and set the flag `ddp_find_unused_parameters = False` in `SFTConfig` or `TrainingArguments`.

- Run `accelerate launch train.py` or `torchrun --nproc_per_node N_GPUS train.py`, where N_GPUS is the number of GPUs you have.

Pipeline / model splitting loading is also allowed, so if you do not have enough VRAM for 1 GPU to load say Llama 70B, no worries - we will split the model for you on each GPU! To enable this, use the device_map = "balanced" flag:

Also several contributors have created repos to enable or improve multi-GPU support with Unsloth, including:

- unsloth-5090-multiple: A fork enabling Unsloth to run efficiently on multi-GPU systems, particularly for the NVIDIA RTX 5090 and similar setups.

- opensloth: Unsloth with support for multi-GPU training including experimental features.

Stay tuned for our official announcement!

For more details, check out our ongoing Pull Request discussing multi-GPU support.

Examples:

Example 1 (python):

from unsloth import FastLanguageModel

model, tokenizer = FastLanguageModel.from_pretrained(

"unsloth/Llama-3.3-70B-Instruct",

load_in_4bit = True,

device_map = "balanced",

)

(4) Customized chat templates

URL: llms-txt#(4)-customized-chat-templates

Beginner? Start here!

URL: llms-txt#beginner?-start-here!

If you're a beginner, here are some of the first questions you might ask before your first fine-tune. You can also always ask our community by joining our Reddit page.

| Page | Topic | Topic | Link |
|---|---|---|---|
| fine-tuning-llms-guide | Step-by-step on how to fine-tune! | Learn the core basics of training. | fine-tuning-llms-guide |
| what-model-should-i-use | Instruct or Base Model? | How big should my dataset be? | what-model-should-i-use |
| tutorials-how-to-fine-tune-and-run-llms | How to Run & Fine-tune DeepSeek? | What settings should I set when running Gemma 3? | tutorials-how-to-fine-tune-and-run-llms |
| faq-+-is-fine-tuning-right-for-me | What can fine-tuning do for me? | RAG vs. Fine-tuning? | faq-+-is-fine-tuning-right-for-me |
| install-and-update | How do I install Unsloth locally? | How to update Unsloth? | install-and-update |
| datasets-guide | How do I structure/prepare my dataset? | How do I collect data? | |
| unsloth-requirements | Does Unsloth work on my GPU? | How much VRAM will I need? | unsloth-requirements |
| running-and-saving-models | How do I save my model locally? | How do I run my model via Ollama or vLLM? | running-and-saving-models |
| lora-hyperparameters-guide | What happens when I change a parameter? | What parameters should I change? | |

Until v0.11.1 release, you need to install vLLM from nightly build

URL: llms-txt#until-v0.11.1-release,-you-need-to-install-vllm-from-nightly-build

uv pip install -U vllm --pre --extra-index-url https://wheels.vllm.ai/nightly

from vllm import LLM, SamplingParams
from vllm.model_executor.models.deepseek_ocr import NGramPerReqLogitsProcessor
from PIL import Image

Examples:

Example 1 (unknown):

2. Then run the following code:


Finetuning from Last Checkpoint

URL: llms-txt#finetuning-from-last-checkpoint

Contents:

- Wandb Integration

Checkpointing allows you to save your finetuning progress so you can pause it and then continue.

You must first edit the Trainer to add save_strategy and save_steps. The example below saves a checkpoint every 50 steps to the outputs folder.

Then, in the trainer, call:

This starts from the latest checkpoint and continues training.
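The Hugging Face Trainer stores each checkpoint as a `checkpoint-<step>` subfolder of `output_dir`, and `resume_from_checkpoint = True` picks the most recent one (Transformers ships `transformers.trainer_utils.get_last_checkpoint` for this). A plain-Python sketch of that lookup, useful for checking which folder will be used or for passing an explicit path, since `resume_from_checkpoint` also accepts a path string. `latest_checkpoint` is a hypothetical name; note that it compares step numbers numerically, so `checkpoint-100` beats `checkpoint-50`.

```python
import os
import re

_CHECKPOINT_RE = re.compile(r"^checkpoint-(\d+)$")


def latest_checkpoint(output_dir="outputs"):
    """Return the path of the highest-numbered checkpoint-<step> folder, or None."""
    if not os.path.isdir(output_dir):
        return None
    steps = []
    for name in os.listdir(output_dir):
        match = _CHECKPOINT_RE.match(name)
        if match and os.path.isdir(os.path.join(output_dir, name)):
            steps.append((int(match.group(1)), name))
    if not steps:
        return None
    return os.path.join(output_dir, max(steps)[1])  # numeric max, not string max
```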

Wandb Integration

Examples:

Example 1 (python):

trainer = SFTTrainer(

....

args = TrainingArguments(

....

output_dir = "outputs",

save_strategy = "steps",

save_steps = 50,

),

)

Example 2 (python):

trainer_stats = trainer.train(resume_from_checkpoint = True)

import os # Optional for faster downloading

URL: llms-txt#import-os-#-optional-for-faster-downloading

Unsloth Inference

URL: llms-txt#unsloth-inference

Learn how to run your finetuned model with Unsloth's faster inference.

Unsloth natively supports 2x faster inference. For our inference-only notebook, click here.

All QLoRA, LoRA and non-LoRA inference paths are 2x faster. This requires no code changes or new dependencies.

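from unsloth import FastLanguageModel
from transformers import TextStreamer

max_seq_length = 2048  # use the same value as during training
dtype = None           # None = auto-detect (float16 / bfloat16)
load_in_4bit = True    # use the same value as during training

model, tokenizer = FastLanguageModel.from_pretrained(
    model_name = "lora_model", # YOUR MODEL YOU USED FOR TRAINING
    max_seq_length = max_seq_length,
    dtype = dtype,
    load_in_4bit = load_in_4bit,
)
FastLanguageModel.for_inference(model) # Enable native 2x faster inference

# Placeholder prompt: format it the same way as your training data.
inputs = tokenizer(["Your prompt here"], return_tensors = "pt").to("cuda")
text_streamer = TextStreamer(tokenizer)
_ = model.generate(**inputs, streamer = text_streamer, max_new_tokens = 64)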

NotImplementedError: A UTF-8 locale is required. Got ANSI

Sometimes when you execute a cell this error can appear. To solve this, in a new cell, run the below:

Examples:

Example 1 (python):

import locale

locale.getpreferredencoding = lambda: "UTF-8"

DeepSeek-R1: How to Run Locally

URL: llms-txt#deepseek-r1:-how-to-run-locally

Contents:

- Using llama.cpp (recommended)

A guide on how you can run our 1.58-bit Dynamic Quants for DeepSeek-R1 using llama.cpp.

{% hint style="success" %} Please see https://docs.unsloth.ai/basics/deepseek-r1-0528-how-to-run-locally for an updated DeepSeek R1-0528 (May 28th 2025 version) {% endhint %}

Using llama.cpp (recommended)

- Do not forget about the `<|User|>` and `<|Assistant|>` tokens! Or use a chat template formatter.

- Obtain the latest `llama.cpp` at: github.com/ggerganov/llama.cpp. You can follow the build instructions below as well.

- It's best to use `--min-p 0.05` to counteract very rare token predictions; I found this to work well especially for the 1.58bit model.

- Download the model via:

Examples:

Example 1 (bash):

apt-get update

apt-get install pciutils build-essential cmake curl libcurl4-openssl-dev -y

git clone https://github.com/ggerganov/llama.cpp

cmake llama.cpp -B llama.cpp/build \

-DBUILD_SHARED_LIBS=ON -DGGML_CUDA=ON -DLLAMA_CURL=ON

cmake --build llama.cpp/build --config Release -j --clean-first --target llama-quantize llama-cli llama-gguf-split

cp llama.cpp/build/bin/llama-* llama.cpp

Memory Efficient RL

URL: llms-txt#memory-efficient-rl

Contents:

- :sparkles:How to enable optimizations

- :mortar_board:No more gpu_memory_utilization!

- :interrobang:Why does RL use so much memory?

- 🦥Unsloth Standby

- 🧪Performance Experiments

- H100 Experiments

- Previous A100 40GB experiments

- :tada:Other optimizations

- :books:GRPO Notebooks

We're excited to introduce more efficient reinforcement learning (RL) in Unsloth with multiple algorithmic advancements:

- 1.2 to 1.7x increased context lengths with no slowdown and no extra memory usage!

- 10% faster RL training runs with revamped kernels and async data movements

- 2x faster `torch.compile` times during model loading

Unsloth already increases RL training speed, context window and reduces VRAM usage by 50–90% vs. all other setups with FA2, but now Unsloth's Standby improves this even further. Our Standby feature uniquely limits speed degradation compared to other implementations and sometimes makes training even faster!

Now, Qwen3-32B LoRA 16-bit can reach a 6,144-token context length vs 3,600 before (1.7x longer) on a single H100 80GB GPU. Llama-3.1-8B QLoRA 4bit can reach 47,500 tokens vs 42,000 before (1.13x longer).

We made RL runs 10% faster through various kernel optimizations, and removed the LoRA communication channel between the CPU and GPU when switching from training to inference mode. Finally, we used custom torch.compile flags to make vLLM's rollout faster by 10%, and reduced compilation time by 2x.

:sparkles:How to enable optimizations

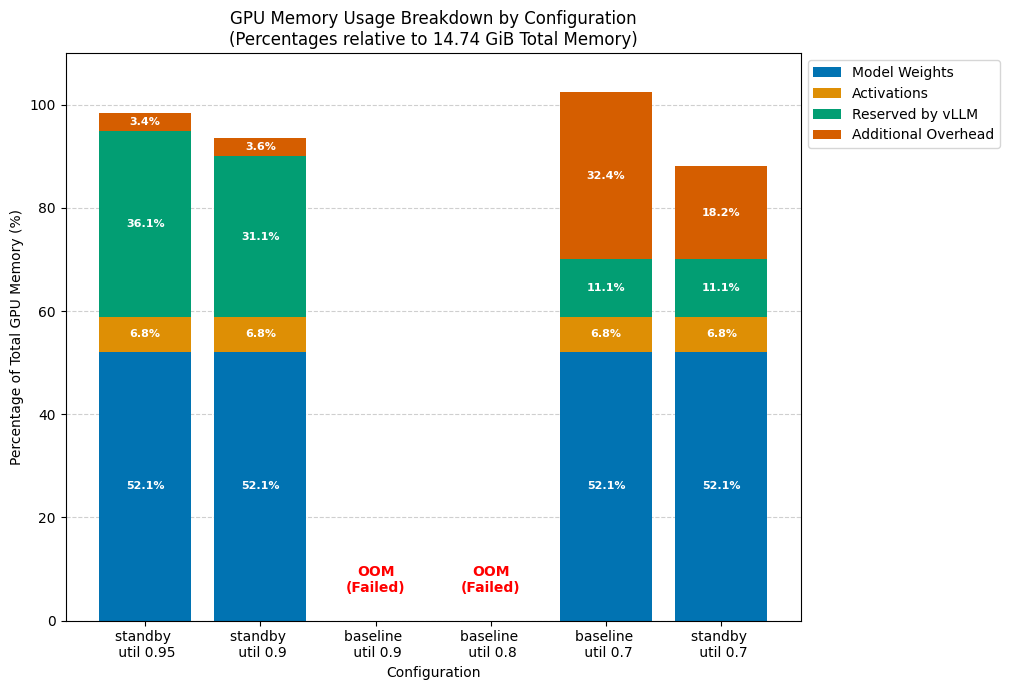

To enable Unsloth's Standby feature, set the environment variable UNSLOTH_VLLM_STANDBY before any Unsloth import. Then set gpu_memory_utilization = 0.95 and that's it!

:mortar_board:No more gpu_memory_utilization!

With Unsloth's new RL improvements, you NEVER have to worry about tuning or setting gpu_memory_utilization ever again - simply set it to 90% or 95% of GPU utilization - 100% sadly won't work since some space is needed for small tensors. Previously one had to tune it from 30% to 95% - no more now! Set it to the maximum and Unsloth will handle the rest!

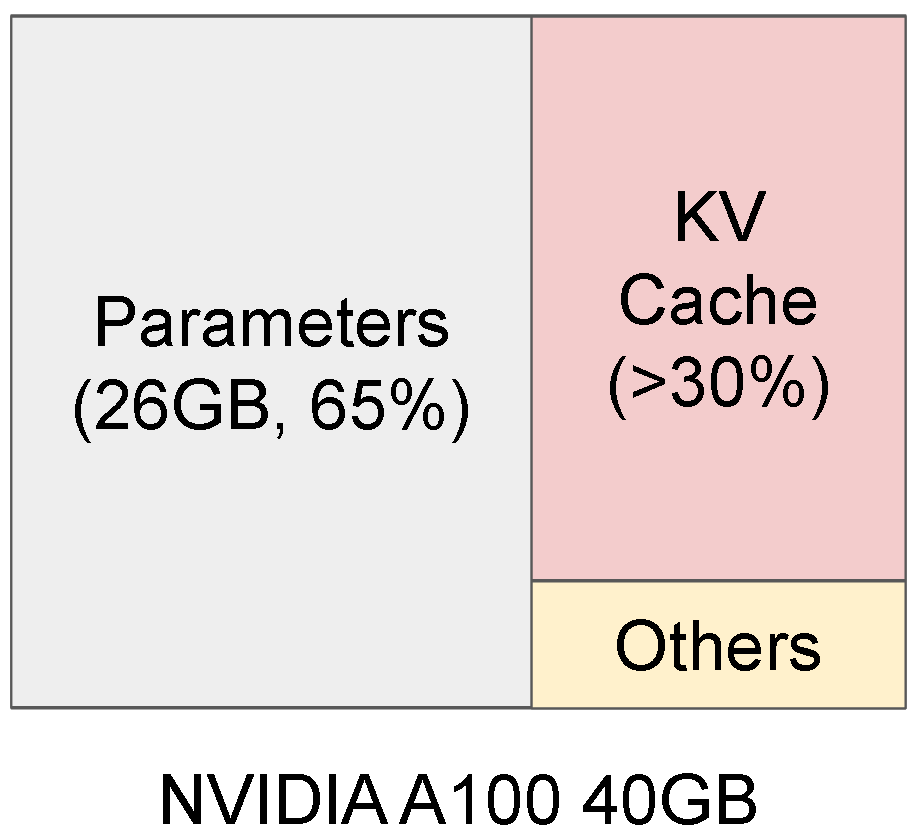

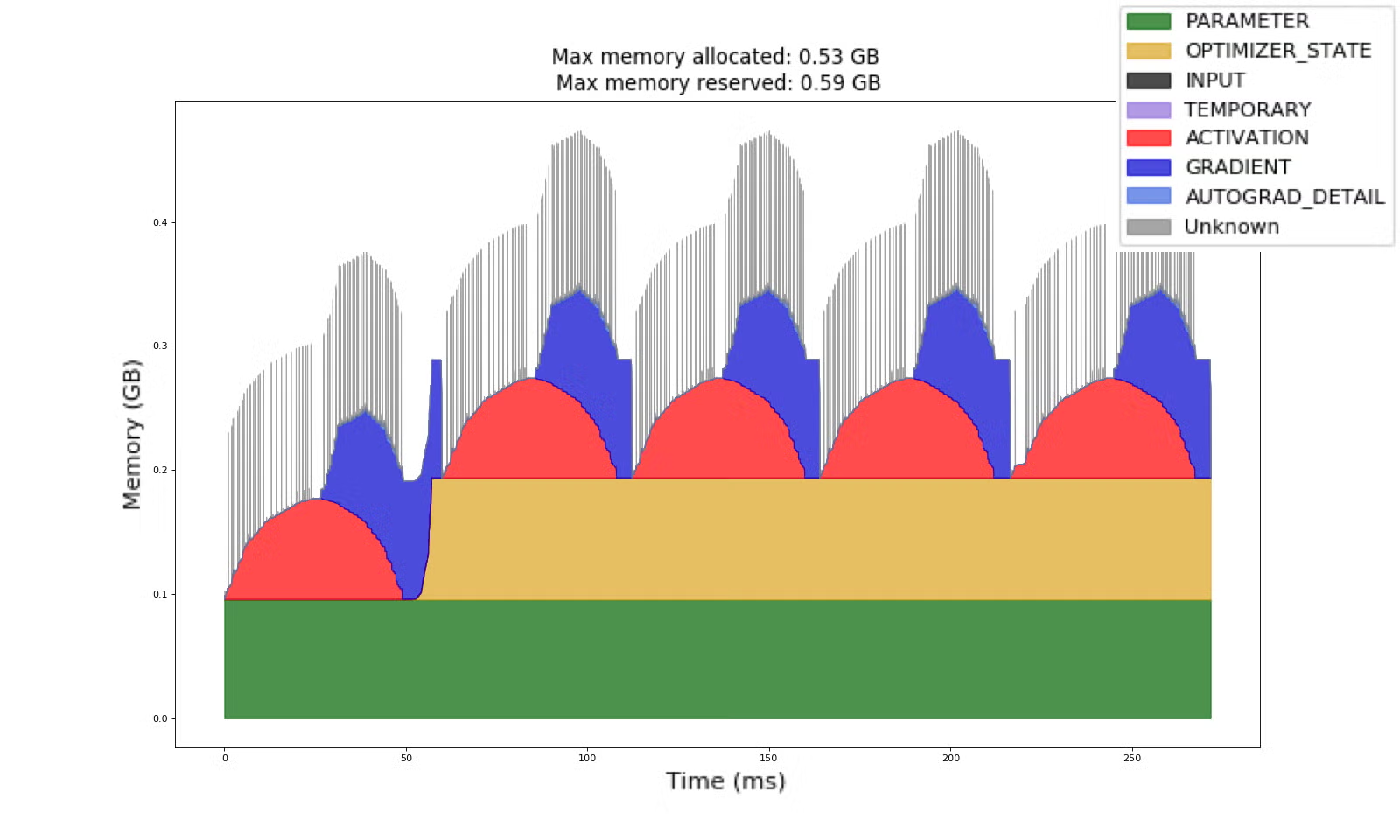

:interrobang:Why does RL use so much memory?

GRPO (and many RL variants) rely heavily on generation, which is primarily powered by vLLM. But this comes with a steep cost, since it requires constant GPU memory for weights, activations, and the KV cache.

{% columns %} {% column width="41.66666666666667%" %} Inference takes a lot of VRAM {% endcolumn %}

{% column width="58.33333333333333%" %} Whilst Training also uses VRAM! {% endcolumn %} {% endcolumns %}

This means RL needs to keep 2 sets of VRAM / memory on the GPU at the same time:

- Inference engine (has model weights, KV cache)

- Training engine (has model weights, activations, gradients, optimizer states)

Current RL frameworks have to split an 80GB GPU 50/50, with 50% for inference and 50% for training. And moving weights from training mode to inference mode can take quite some time.

| 80GB GPU | Inference Engine (50%) | Training Engine (50%) |
|---|---|---|
| Model Weights | 16GB | 16GB |
| KV Cache | 24GB | |
| Activations, Gradients, Optimizer States | | 24GB |

Previous Unsloth versions already optimize the above smartly: we share vLLM's weight space directly, which removes the duplicate copy of the model weights. In this example that frees up 16GB, which can be used to increase context length or generation speed. We also avoid moving memory between the two engines, which makes training faster.

| 80GB GPU | Inference Engine (50%) | Training Engine (50%) |
|---|---|---|
| Model Weights | 16GB SHARED | <<< SHARED |
| KV Cache | 24GB + 8GB = 32GB | |
| Activations, Gradients, Optimizer States | | 24GB + 8GB = 32GB |
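The arithmetic behind the two tables above can be reproduced in a few lines, assuming (as the tables do) 16GB of model weights and an even split of whatever memory the weights leave free. split_after_weights is a hypothetical name used only for this illustration.

```python
def split_after_weights(total_gb: float, weights_gb: float, share_weights: bool) -> tuple[float, float]:
    """Return (inference GB, training GB) left after the weights, split 50/50."""
    weight_copies = 1 if share_weights else 2  # sharing: one copy serves both engines
    free_gb = total_gb - weight_copies * weights_gb
    return free_gb / 2, free_gb / 2


print(split_after_weights(80, 16, share_weights=False))  # (24.0, 24.0)
print(split_after_weights(80, 16, share_weights=True))   # (32.0, 32.0)
```

Sharing the weights frees one 16GB copy, which is where the extra 8GB per engine in the second table comes from.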

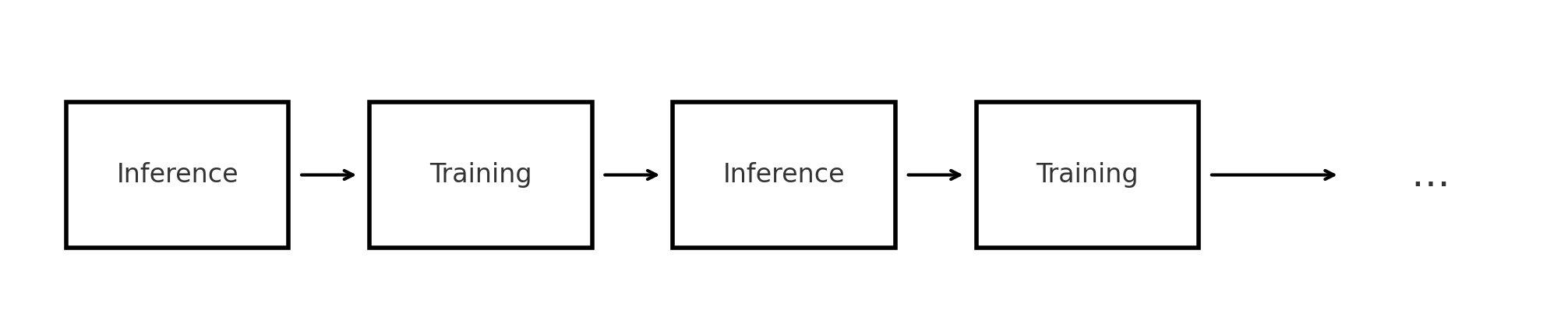

But we can go further. RL alternates between modes: inference, then training, then inference, then training, and so on.

This means the memory space for inference and training can, in theory, be re-used, since inference and training are separate modes. This is where vLLM's sleep mode feature comes in, which has 2 options:

- level = 1: copies the weights to the CPU and deletes the KV cache

- level = 2: deletes the weights and deletes the KV cache
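As a rough mental model of the two levels, the toy function below reports how much GPU memory each level releases and how much ends up in CPU RAM, using the 16GB weights / 24GB KV cache figures from the first table. It is a simplified illustration under our own assumptions: sleep_effect is hypothetical and does not call vLLM.

```python
def sleep_effect(level: int, weights_gb: float, kv_cache_gb: float) -> tuple[float, float]:
    """Toy model (not vLLM code): return (GPU GB freed, CPU GB used) for a sleep level."""
    if level == 1:
        # Weights are copied to CPU RAM and the KV cache is deleted.
        return weights_gb + kv_cache_gb, weights_gb
    if level == 2:
        # Weights and KV cache are both deleted; nothing is parked on the CPU.
        return weights_gb + kv_cache_gb, 0.0
    raise ValueError("level must be 1 or 2")


print(sleep_effect(1, weights_gb=16, kv_cache_gb=24))  # (40, 16)
print(sleep_effect(2, weights_gb=16, kv_cache_gb=24))  # (40, 0.0)
```

In both levels the weights leave the GPU, which cannot be allowed when the training engine is using those very same weights.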

But remember: in Unsloth, we share vLLM's memory space for the weights. This means we need a new way to delete the KV cache while leaving the weights in place, and we call this Unsloth Standby.

| 80GB GPU | Inference Engine | Training Engine |
|---|---|---|
| Model Weights | 16GB SHARED | <<< SHARED |
| Multi-purpose 64GB space | KV Cache | Activations, Gradients, Optimizer States |
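The benefit of the multi-purpose space is that the two phases take turns instead of coexisting. The toy simulation below (a hypothetical ToyGpuPool, not Unsloth's allocator) models an 80GB card with 16GB of shared weights: if the KV cache is released before each training phase, both phases can use the full 64GB; if it stays resident, the same sizes run out of memory.

```python
class ToyGpuPool:
    """Hypothetical fixed-capacity memory pool that tracks named allocations in GB."""

    def __init__(self, capacity_gb: float) -> None:
        self.capacity_gb = capacity_gb
        self.allocs: dict = {}
        self.peak_gb = 0.0

    def used_gb(self) -> float:
        return sum(self.allocs.values())

    def alloc(self, name: str, gb: float) -> None:
        if self.used_gb() + gb > self.capacity_gb:
            raise MemoryError(f"OOM while allocating {name} ({gb} GB)")
        self.allocs[name] = gb
        self.peak_gb = max(self.peak_gb, self.used_gb())

    def free(self, name: str) -> None:
        del self.allocs[name]


def run_rl_steps(kv_gb: float, train_gb: float, standby: bool, steps: int = 3) -> float:
    """Alternate inference and training phases on an 80GB pool; return peak usage in GB."""
    pool = ToyGpuPool(80)
    pool.alloc("weights", 16)  # one copy, shared by the inference and training engines
    for _ in range(steps):
        pool.alloc("kv_cache", kv_gb)  # inference phase
        if standby:
            pool.free("kv_cache")  # standby: drop the KV cache, keep the weights
        pool.alloc("train_state", train_gb)  # activations, gradients, optimizer states
        pool.free("train_state")
        if not standby:
            pool.free("kv_cache")  # without standby the KV cache stayed resident during training
    return pool.peak_gb


print(run_rl_steps(32, 32, standby=False))  # 80: the static 32GB / 32GB split fits
print(run_rl_steps(64, 64, standby=True))   # 80: each phase gets the full 64GB
```

Calling run_rl_steps(64, 64, standby=False) raises MemoryError on the first training phase, which is the 50/50 limitation of the earlier tables in miniature.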

To enable Unsloth Standby, simply set the UNSLOTH_VLLM_STANDBY environment variable to "1" in all RL / GRPO training runs before any Unsloth import, as in Example 2 below.

🧪Performance Experiments

Here is how we benchmarked memory usage and context length for GRPO. Note that we use 2 generations per prompt, because GRPO needs at least 2 generations to calculate the sample mean and standard deviation. With a single generation, the standard deviation is 0, which makes the advantage, computed as (reward - mean) / std, undefined:

$$
Z = \frac{r_i - \mu}{\sqrt{\frac{1}{n}\sum_{j=1}^{n}(r_j - \mu)^2}}
$$

$$
Z_{n=1} = \frac{r_1 - \mu}{\sqrt{\frac{1}{1}\sum_{j=1}^{1}(r_j - \mu)^2}} = \frac{0}{0} = \text{undefined}, \quad \text{since } \mu = r_1
$$

This means that, for GRPO specifically, a maximum context length of 6,144 for Qwen3-32B is actually 6,144 multiplied by 2 generations, i.e. 12,288 tokens in total.
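The advantage formula above can be sketched directly in Python, using the population standard deviation (the 1/n in the denominator) to mirror the equation. This is an illustration only: group_advantages is a hypothetical name, and practical GRPO implementations often add a small epsilon to the denominator instead of raising.

```python
import statistics


def group_advantages(rewards: list[float]) -> list[float]:
    """Z-score each reward within one prompt's group of generations."""
    mu = statistics.fmean(rewards)
    sigma = statistics.pstdev(rewards)  # sqrt((1/n) * sum((r - mu)^2))
    if sigma == 0:
        # n = 1 (or identical rewards) gives 0/0, so the advantage is undefined.
        raise ZeroDivisionError("need at least 2 generations with differing rewards")
    return [(r - mu) / sigma for r in rewards]


print(group_advantages([1.0, 0.0]))  # [1.0, -1.0]
```

Calling group_advantages([0.7]) with a single generation raises, which is the n = 1 case worked out above.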

We provide experiments for Llama-3.1 8B on both LoRA (16bit) and QLoRA (4bit) below:

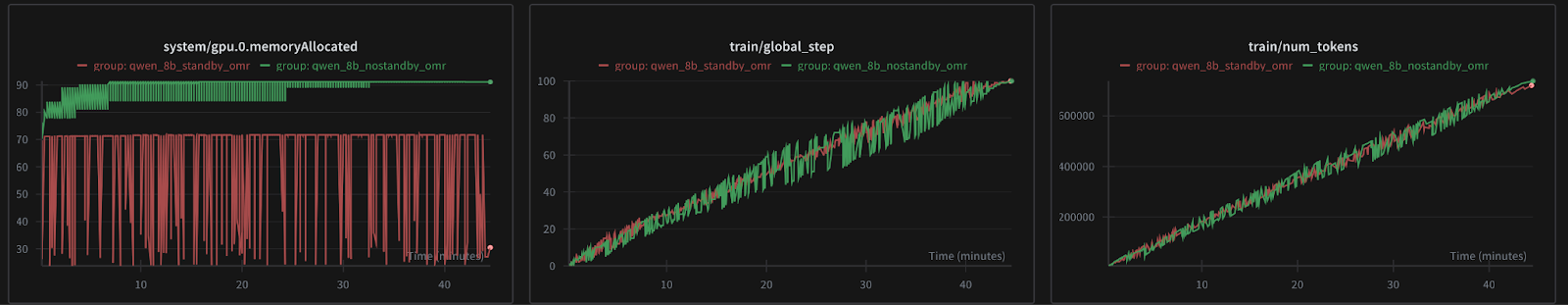


Any training-time differences are small. In our apples-to-apples comparison, we saw slowdowns of under 1% or even speedups, which can be attributed to the margin of error.

We also theorize that speedups are possible due to reduced memory pressure, which may mean less memory cleanup work for the CUDA memory allocator.

The image above shows the difference between baseline and standby mode on a single T4 GPU for Qwen3-4B. We can stretch vLLM's gpu_memory_utilization as high as 0.95 without worrying that it will affect training. This means you can fit longer sequences and process more of them. In the first case, for example, we have enough memory to fit and process 32K-length sequences (provided training allows), whereas previously any input longer than 2K might not fit and would end up causing OOMs (out-of-memory errors).

| Status | GPU Memory usage | Comments |
|---|---|---|
| Runs for 40 steps / 40 minutes | 14.5 GiB (set by vllm_gpu_util) | Enough to fit in 32K KVCache with chunk of 2-4K or say 16K KVCache + 16K chunks |
| Runs 32 steps in 40 m | 13.8 GiB (set by…) | Approx enough to fit in ~28K KVCache with chunk of 2-4K or say 15K KVCache + 15K chunks |
| Model loads but can't train because even batch size of 1 doesn't fit | OOM | |
| Model loads but can't train because even batch size of 1 doesn't fit | OOM | |
| Trains fine, 28 steps take 39 min | ~15.1 GiB | Any input slightly longer will result in OOM on Colab |
| Trains fine, 29 steps take 40 min | 13 GiB, but most of the time around 10-11 GB | At the same config, we save 2 GiB (about 15% memory) here. Can be higher for longer sequences |

| Model | GPU | Sequence Length | Num Generations | Gradient Accumulation Steps |
|---|---|---|---|---|
| Qwen2.5-14B-Instruct | NVIDIA H100 80GB PCIe | 32,768 | 8 | 4 |

In our results below, you can see a 9GiB difference in peak memory used (note that 90% of the time, GPU memory usage equals the peak memory in our case). To put things into perspective, using TRL and LoRA we were only able to fine-tune an 8B-parameter model with a maximum context length of 1,024 (32x less). Anything with a longer sequence length (with a similar configuration) fails with OOM.

The image below compares standby against non-standby training with Unsloth, averaged over 3 runs to make sure the metrics aren't noisy. In fact, if you zoom in closely enough, you'll see that enabling standby also makes training faster, probably due to less memory pressure, as discussed before.

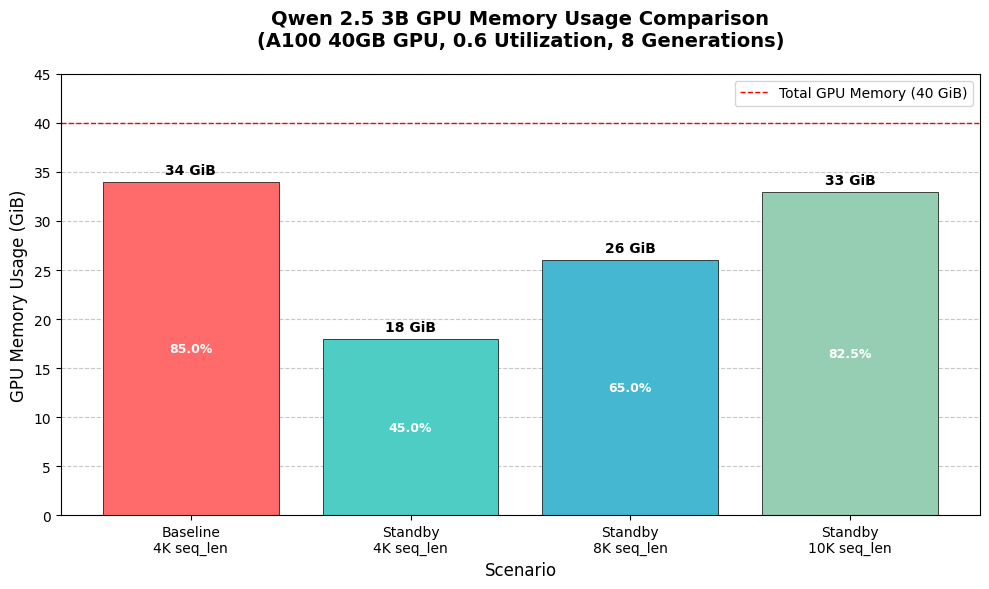

Previous A100 40GB experiments

In our previous experiments on an A100 40GB GPU with Qwen2.5-3B-Instruct and 8 generations per sample, we observed that without standby, GRPO training (model loaded in 16-bit, LoRA, only weights trainable) could only fit 6K sequence lengths. With our standby feature, we were able to fit 10K and beyond! For comparison, TRL can only give you context lengths of up to 1K while holding the same batch size.

:tada:Other optimizations

We now select better compilation flags and cut compile times by 50% or more. We also managed to dynamically patch any vLLM version to handle gc.collect better (for backwards compatibility), inspired by this vLLM pull request. This reduces compilation times from 2 minutes to under 40 seconds.

We also tried turning on additional torch.compile flags. Unfortunately, combo_kernels and multi_kernel did not function correctly on vLLM 0.10 with Torch 2.8/2.9 nightly, and coordinate_descent_tuning made autotuning all kernels dramatically slower: compilation that used to take under a minute took 13 minutes or more with it enabled, for minimal performance gains.

:books:GRPO Notebooks

All our GRPO notebooks have Unsloth Standby and all of these optimizations enabled by default! See https://docs.unsloth.ai/get-started/unsloth-notebooks for all our GRPO notebooks, or try the ones below:

- Qwen3 (4B) - Advanced GRPO LoRA

- DeepSeek-R1-0528-Qwen3 (8B) (for multilingual use cases)

- Gemma 3 (1B)

- Llama 3.2 (3B) - Advanced GRPO LoRA

- Llama 3.1 (8B)

- Phi-4 (14B)

- Mistral v0.3 (7B)

- Qwen2.5 (3B)

Examples:

Example 1 (python):

```python
import os

# Must be set before any Unsloth import
os.environ["UNSLOTH_VLLM_STANDBY"] = "1"

from unsloth import FastLanguageModel
import torch

model, tokenizer = FastLanguageModel.from_pretrained(
    model_name = "unsloth/Qwen3-8B-Base",
    max_seq_length = 2048, # Can increase for longer reasoning traces
    load_in_4bit = False, # False for LoRA 16bit
    fast_inference = True, # Use vLLM for fast generation (rollouts)
    max_lora_rank = 32, # Larger rank = smarter, but slower
    gpu_memory_utilization = 0.95, # Safe to set high with Standby enabled
)
```

Example 2 (python):

```python
import os

os.environ["UNSLOTH_VLLM_STANDBY"] = "1"
```

Example 3 (unknown):

Standby mode enabled:

|===========================================================================|

| PyTorch CUDA memory summary, device ID 0 |

|---------------------------------------------------------------------------|

| CUDA OOMs: 0 | cudaMalloc retries: 0 |

|===========================================================================|

| Metric | Cur Usage | Peak Usage | Tot Alloc | Tot Freed |

|---------------------------------------------------------------------------|

| Allocated memory | 32249 MiB | 43042 MiB | 128336 GiB | 128305 GiB |

| from large pool | 31415 MiB | 42165 MiB | 127204 GiB | 127173 GiB |

| from small pool | 834 MiB | 1184 MiB | 1132 GiB | 1131 GiB |

|---------------------------------------------------------------------------|

| Active memory | 32249 MiB | 43042 MiB | 128336 GiB | 128305 GiB |

| from large pool | 31415 MiB | 42165 MiB | 127204 GiB | 127173 GiB |

| from small pool | 834 MiB | 1184 MiB | 1132 GiB | 1131 GiB |

|---------------------------------------------------------------------------|

| Requested memory | 32199 MiB | 42987 MiB | 128176 GiB | 128145 GiB |

| from large pool | 31364 MiB | 42110 MiB | 127047 GiB | 127016 GiB |

| from small pool | 834 MiB | 1184 MiB | 1129 GiB | 1128 GiB |

|---------------------------------------------------------------------------|

| GPU reserved memory | 37644 MiB | 47504 MiB | 705806 MiB | 668162 MiB |

| from large pool | 36376 MiB | 46588 MiB | 682818 MiB | 646442 MiB |

| from small pool | 1268 MiB | 1284 MiB | 22988 MiB | 21720 MiB |

|---------------------------------------------------------------------------|

| Non-releasable memory | 713142 KiB | 4633 MiB | 103206 GiB | 103205 GiB |

| from large pool | 525312 KiB | 4594 MiB | 101923 GiB | 101922 GiB |

| from small pool | 187830 KiB | 250 MiB | 1283 GiB | 1283 GiB |

|---------------------------------------------------------------------------|

| Allocations | 3460 | 4809 | 15606 K | 15603 K |

| from large pool | 395 | 563 | 2812 K | 2811 K |